EVALUATION IN A SYSTEMS PERSPECTIVE OF PLANNING

Thorbjoern Mann October 2020

Note: This is a section of a larger forthcoming study on evaluation in the planning discourse, offered for discussion in a systems community, since it raises some questions about the systems perspective.

Systems perspectives of planning

In just about any discourse about improving approaches to planning and policy-making, we find claims containing reference to systems: ‘Systems Approach’, the ‘Systems Perspective’, ‘Systems Thinking’, ‘Systems modeling and simulation’, the need to understand ‘the whole system’, the counterintuitive behavior of systems. Systems thinking as a mental framework is described as ‘humanity’s currently best tool for dealing with its problems and challenges’. By now, there are so many variations, sub-disciplines, approaches and techniques, different definitions of ‘system’ and corresponding approaches on the academic as well as the practitioners’ consulting market, that a comprehensive description of this field would become a book-length project.

The focus here is a much narrower issue: the relationship between the ‘systems perspective’ (for convenience, this term will be used here to refer to the entire field) and evaluation tasks in the planning process. It is triggered to some extent by an impression that many ‘systems-perspective’ approaches seem to marginalize or even entirely avoid evaluation. This study will necessarily be quite general, not doing adequate justice to many specific brands of systems theory and practice. However, even this brief look at the subject from the planning / evaluation perspective will identify some issues that call for more discussion.

Systems-related practice might even be seen as a synonym for ‘planning’ since both involve similar general aims: to develop ideas for effective action to intervene in what both call the ‘context’ of perceived real situations, to change or ‘transform’ those situations. ‘Context’ is one of only a few shared terms for the same concepts; the vocabulary of the different ‘perspectives’ is full of different names for what essentially are the same things. This especially applies to the facet of evaluation. So it seems appropriate to look at the question of the role of evaluation in a systems-perspective-guided model of planning.

The role of evaluation in a systems model of planning

Would a systematic approach to this question require a common, well-articulated and sufficiently detailed ‘systems model’ of planning, against which concepts and expectations of evaluation (such as those explored in other sections of this study) could be matched? There are many general definitions of ‘system’ and ‘planning’ and related concepts such as ‘rational planning’, but few if any of these are sufficiently specific for the purpose of this investigation. There are many more detailed ‘models of planning’, not necessarily based on a well-defined overall ‘systems’ perspective, but in detail aimed at specific domains. This introduces too many considerations applicable to the respective fields to constitute a useful general ‘systems model of planning’. The development of such a general model is beyond the scope of this project. As a first ‘stopgap’ approach for the purpose of this study, a few selected common ‘systems perspective’ terms, tenets, and assumptions will be examined and their implications for evaluation explored.

A common view of ‘system’ is that of a collection of elements or entities connected into a coherent ‘whole’. Thus, any such set of entities and relationships can be seen as a system. (The term ‘can be seen as…’ means that the choice of declaring something a system takes place in somebody’s mind. Whether and how well that selection reflects ‘reality’ is a different issue, since the mind can also hold conceptions of entirely imaginary entities that ‘exist’ – that is, whose ‘reality’ is not anywhere out there in what we call ‘reality’ but only in someone’s mind, an entity whose ‘reality’ itself is ardently disputed by many thinkers.)

To begin a discussion about what might be an appropriate set of system components and relationships for a general perspective of ‘planning’ raises a question: what kinds of ‘components’ and ‘relationships’? The frame might focus on the kinds and roles of agents involved in planning, or on procedural steps and rules, for example, or on the different forms of information exchanges between the parties. The following discussion will use a relatively common framework, that of activities related to objects: variables distinguished as belonging to phases concerned with ‘context’, ‘solution development’, ‘performance’, ‘decision’ and ‘implementation’.

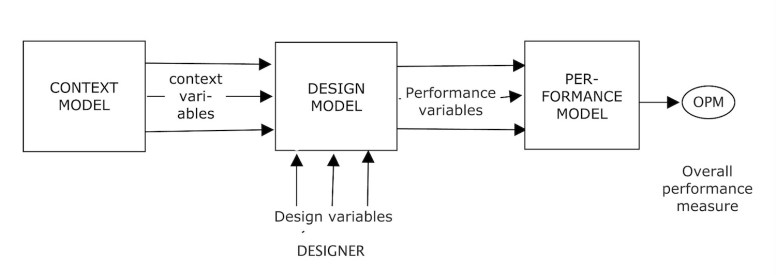

Diagrams of the planning system described in this way, for example Rittel’s ‘design as a system’ diagram (figure 1), distinguish the planning or design activity from the overall ‘context’ or ‘environment’ – everything else ‘out there’. The diagram shows ‘context model’ (it is not clear whether the model refers to ‘everything out there’ or just those context aspects pertinent to the project), ‘design model’ and ‘evaluation model’ as three clearly separate boxes. The ‘designer’, shown outside of the ‘design model’, must anticipate the values of ‘context’ variables (defined as influences on the project that the designer cannot control) while manipulating ‘design variables’ (those the designer feels can be controlled or manipulated) in the ‘design’ box, where the ‘input’ of context and design variables generates ‘outputs’ of ‘performance variables’ that are then aggregated in the evaluation box into an ‘overall performance judgment’. The designer considers various settings of design variables until a satisfactory value of the overall performance judgment is achieved.

Figure 1: Rittel’s systems model of design [1]
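The three boxes and the designer's search loop can be made concrete in a small sketch. It is only an illustration of the structure described above, not Rittel's own formulation: the room-design example, the variable names, the formulas in `design_model`, the weighted-sum aggregation in `evaluation_model`, and all numbers are assumptions introduced here.

```python
from itertools import product

# Hypothetical example: a room design with two design variables.
# All formulas, weights, and numbers are illustrative assumptions.

def design_model(context, design):
    """'Design' box: context and design variables in, performance variables out."""
    daylight = design["window_area"] * context["sky_brightness"]
    heat_loss = (design["window_area"] * 3.0
                 + 10.0 / design["insulation"]) * context["cold_days"] / 100
    cost = design["window_area"] * 200 + design["insulation"] * 50
    return {"daylight": daylight, "heat_loss": heat_loss, "cost": cost}

def evaluation_model(performance, weights):
    """'Evaluation' box: aggregate performance variables into one overall judgment.
    The weights are one person's judgments, not facts about the context."""
    return sum(weights[name] * performance[name] for name in weights)

def search(context, options, weights, threshold):
    """The designer tries settings of the design variables until the
    overall performance judgment is satisfactory (>= threshold)."""
    names = list(options)
    for values in product(*(options[name] for name in names)):
        design = dict(zip(names, values))
        score = evaluation_model(design_model(context, design), weights)
        if score >= threshold:
            return design, score
    return None, None

# Context variables: anticipated, but not controlled, by the designer.
context = {"sky_brightness": 1.0, "cold_days": 100}
# Design variables: the settings the designer believes can be manipulated.
options = {"window_area": [1, 2, 4], "insulation": [1, 2, 5]}
# Evaluation weights: positive for desired, negative for undesired outcomes.
weights = {"daylight": 1.0, "heat_loss": -0.1, "cost": -0.001}

chosen, score = search(context, options, weights, threshold=0.3)
print(chosen, score)
```

Note that the search stops at the first ‘satisfactory’ setting rather than the best one, and that both the weights and the threshold are judgments supplied from outside the ‘facts’ of the context.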

A common systems community mantra is that ‘everything is connected to everything else.’ Thus, according to this understanding of ‘system’, what we call the ‘reality’ of everything (the world or universe, the ‘context’ of the design or planning activity) is a system, though we never ‘know’ more than a small part of it. It includes, in some way, the entities and agents involved in or affected by the problem the plan aims at remedying, among them the designer, planner, and systems modeling expert themselves. It also includes the variables the designer has decided can be manipulated, now calling those ‘design variables’. What about finding out, for example, that initial assumptions about which variables can be controlled and which cannot are mistaken: some forces assumed to be ‘controllable’ turn out not to be, while a ‘creative’ mind may find surprising ways to manipulate ‘context’ variables so that they become ‘design variables’? Already, the distinction between the boxes in a more detailed systems diagram will become fuzzy. The ‘context’ is really the background within which design, assessment, and decision take place. So should a ‘systems diagram’ of planning show the design and evaluation components as parts within that infinite background?

Designers make distinctions among the entities they are aware of. The very perception of context entities as potential candidates for consideration as relevant components of the planning system is based on properties of those entities, and the strength of different types of connections, relationships, acting on their perception and being ‘cognized’ and ‘re-cognized’ by the perceiving ‘mind’. So must the designers’ minds have something like a ‘perception record’ – a picture or ‘model’ in their own set of properties? Is it meaningful to say that a person’s knowledge is the model of the world they are aware of, in that person’s mind? (The model can then have things in it, even entire worlds, that are merely ‘imagined’: not actually in ‘reality’ but existing only as the imaginations of people… This creates the ‘Santa Claus’ phenomenon: imaginary entities for which actual ‘real’ models are manufactured, which now become part of actual reality.)

We also already make judgments about properties and relationships of context entities. Whereas the properties can be observed and measured in reality, the judgments are emerging and exist only in the observers’ mind (until shared with others): ‘this item is pertinent to the project’ or “this relationship is significant, so it should be included in the model”. So far, the judgments may be generally related to whether the elements and relationships in the model are ‘true’ – that they correspond to the actual reality out there and how it works. Roughly speaking, that is the aim of ‘science’, and it is important because we recognize (via repeated experience of making assumptions about reality that turn out to be mistaken) that the model is not necessarily an accurate representation of the real world, as the systems warning ‘The map is not the territory’ tries to remind us. The task of becoming aware of and ‘understanding’ the pertinent context is challenging enough to give it a prominent position and priority in the systems perspective of planning. But it is important to recognize that it already involves judgments that are no longer ‘just the facts’, but assessments of significance related to the purpose of the project.

To the extent systems ‘understanding’ is related to planning, transformation of existing conditions, yet another set of insights and assumptions is needed: The recognition that the world – the context – is changing, whether we do anything about it or not. More specifically: some entities in the context change (the ‘variables’ that describe their properties take on different values), and some appear to be constant, unchanging. The model of the planning system for the project at hand must account for that distinction: the context entities that produce the changes in the context variables for the design must be found and understood (their properties and relationships identified), to develop an adequate model pertinent to the purpose of the activity: to change an unsatisfactory condition, to create a new subsystem, to remedy a ‘problem’. All this involves not just ‘information’ but evaluation judgments.

The systems recommendation to ‘understand the system’ therefore cannot refer only to the context subsystem that relates to the problem. It tries to understand and explain how the elements of the system led to (most commonly: ‘cause’) the current ‘IS-state’ of the context that somebody considers ‘not as it OUGHT to be’ (a definition of ‘problem’) and hopes to find out ‘HOW’ the condition can be changed into the OUGHT state. The ‘cause-effect’ relationship assumed in most models constitutes a paradoxical judgment challenge for approaches urging the planner to identify the ‘root cause’ of a problem, since it seems obvious that removing that cause will resolve the problem. Does this not require an assumption that this root cause is not itself the product or effect of another cause – which seems to be at odds with the very concept of cause and the notion that the plan can remove (‘un-cause’) it? How far along the causal chain must we look to find that cause that does not have a cause? Or must we jump to a judgment that any causal relationships leading into a cause are so weak and insignificant as to justify its determination as the ‘root’ of the problem relationships?

So far, all this is still a sketchy account of just the early stages of planning. Much systems work is focused on the important question of how the initial system will change over time – first, if nothing is done to change it – but then, how it will change in response to what might be done to change it: interventions, plans. ‘Understanding the system’ now means ‘understanding how the system’s behavior is changing over time’. That task has proven to become more challenging – ‘complex’, ‘nonlinear’, ‘counterintuitive’, the larger the network of relationships, and the more (forward and backward) ‘loops’ occur in the network. This is where the contribution of the systems perspective has been most significant, facilitated by the advances in computing technology.

It may be important to consider the distinctions and assumption judgments that are needed to develop a successful simulation model to predict the behavior of a given system over time: the system components, their properties, the variables and their measurement provisions for establishing ‘objective’ descriptions of the states and changes, the relationship functions expressing how the state of a given entity is influenced by the states and state changes in other components. In all these choices, model makers are making many ‘subjective’ judgments. The determination or selection of components to include in the model will be based on some consideration of the strength of relationships between components. The ‘boundary’ of the system is located at the ‘last’ or outermost components of the relationship network that a person considers significant. The boundary will therefore be different for different people. While relationship strength can perhaps be measured ‘objectively’ for past and current states, the judgment about what strength is sufficiently significant for an entity at the edge of the network to be included in the network is not objective. Likewise, the predictions of likelihood of the significance of future changes are entirely subjective judgments.
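The dependence of the system ‘boundary’ on a personal significance judgment can be illustrated with a small sketch. The traffic example, the component names, and the relationship strengths in `RELATIONS` are hypothetical; the only point is that the same ‘objective’ strengths yield different systems for different significance thresholds.

```python
from collections import deque

# Hypothetical relationship strengths (0..1) between components.
# The strengths might be measured; the names and numbers here are assumptions.
RELATIONS = {
    "traffic congestion": {"road capacity": 0.9, "commuter numbers": 0.8,
                           "fuel price": 0.3},
    "commuter numbers": {"housing cost": 0.6, "remote work": 0.4},
    "road capacity": {"city budget": 0.5},
    "housing cost": {"interest rates": 0.2},
}

def system_boundary(start, threshold):
    """Follow relationships outward from the problem component, keeping only
    those that this person judges significant (strength >= threshold)."""
    included = {start}
    queue = deque([start])
    while queue:
        component = queue.popleft()
        for neighbor, strength in RELATIONS.get(component, {}).items():
            if strength >= threshold and neighbor not in included:
                included.add(neighbor)
                queue.append(neighbor)
    return included

# Two people looking at the same relationship data draw different boundaries.
narrow_view = system_boundary("traffic congestion", threshold=0.7)
wide_view = system_boundary("traffic congestion", threshold=0.4)
print(sorted(narrow_view))
print(sorted(wide_view))
```

The threshold is the subjective part: nothing in the relationship data itself says whether 0.7 or 0.4 is the ‘right’ cut-off.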

The selection of ‘initial settings’ of a simulation model run corresponds to the selection of ‘design variables’ in Rittel’s model (as well as what many similar planning or ‘rational’ decision-making models for specific domains describe as the phase of ‘generating plan alternatives’). Those decisions are based on consideration of system components judged to be able to be relatively feasibly changed by applying appropriate actions and resources: interventions. These ‘leverage points’ would still be considered ‘context’ if the designer’s knowledge model does not contain relationship representations judged to offer feasible intervention opportunity.

Simulation of model behavior over time (assumed or hoped to be sufficiently close to the behavior of the ‘real’ system) is part of the quest for ‘understanding the system’. Its ‘output’ is a set of tracks of selected descriptive system variables that describe the system. The early phase runs will be based on the option of ‘doing nothing’ – to see how ‘bad’ the future system states will be. The seriousness of the ‘problem’ (that justifies ‘doing something’) is assessed by the peaks or valleys of the tracks of the system variables. These variables, selected as significant descriptors of the system, may correspond to ‘criteria’ as understood in the discussion of section [..]. The point is that they are already taken as ‘performance’ variables but without making the judgment or evaluation nature of this step explicit. In simple linear planning models, evaluation is something to be done after the ‘plan’ alternatives have been articulated for decision; these alternatives consist of interventions that change some of the ‘current state’ variable values of the model, to see how the peaks and valleys change. But again, ‘evaluation’ activities are seen from the first attempts to ‘understand the context and system behavior’, extended seamlessly into the ‘solution generation’ phase.
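A minimal sketch of such tracks, assuming a single hypothetical stock (say, a pollution level) with constant inflow and one balancing feedback loop: the function name `simulate`, the decay rate, and the emission figures are illustrative assumptions, not taken from any particular simulation practice.

```python
def simulate(steps, emissions, decay=0.1, initial=0.0):
    """Track one descriptive system variable over time.
    Each step adds the inflow and removes a fraction of the current level
    (a balancing feedback loop). All parameters are hypothetical."""
    track = []
    level = initial
    for _ in range(steps):
        level = level + emissions - decay * level
        track.append(level)
    return track

# 'Doing nothing' run versus a run with an intervention on the inflow.
baseline = simulate(50, emissions=10.0)
intervention = simulate(50, emissions=6.0)

# Reading the peak as 'how bad it gets' is already an evaluation judgment:
# the model only reports the level, not that a high level is undesirable.
print(max(baseline), max(intervention))
```

The choice of `level` as the variable to track, and of its peak as the measure of seriousness, is made by the modeler before any explicit ‘evaluation’ phase begins.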

A problematic aspect here would be for this to be taken as the only or even just main performance assessment needed: leading to the temptation to pick that solution alternative showing the most impressive peaks or valleys of the simulation tracks. This concern has of course led to suggestions to change the planning model from the linear sequence to a cyclical or spiral one of repeated cycling through the several stages until one solution seems to emerge as the ‘best’ one.

The concern remains that the predicted descriptive system variables become the main basis for the decision. The flaw lies in constraining the perspective to these ‘objective’ features, ignoring the possibility of differences of opinions. Not only about the probability of fact-assumptions going into the model structure, but more importantly about judgments about desirability, goodness, value of the ‘whole picture’ of the plan: comprehensive evaluation is not part of the model. Simulation track diagrams give the impression that all such differences and controversies have somehow been ‘settled’, when they simply have been omitted from consideration. When attempts of more comprehensive evaluation – e.g. ‘Benefit-Cost Analysis’ — are included, the differences of opinion of different parties being affected by the problem and the proposed solutions are made to disappear by being aggregated into ‘common good’ type measures, for example Gross Social Product, growth rates, or polling results.
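How aggregation makes differences of opinion disappear can be shown with a few invented numbers. The parties, the two plans, and the judgment scale from -3 to +3 are assumptions made only for this illustration.

```python
from statistics import mean

# Hypothetical judgments of two plans by four affected parties,
# on an assumed scale from -3 (very bad) to +3 (very good).
judgments = {
    "Plan A": {"residents": 3, "businesses": -3, "commuters": 3, "farmers": -3},
    "Plan B": {"residents": 0, "businesses": 0, "commuters": 0, "farmers": 0},
}

def aggregate(scores):
    """'Common good' style measure: the average over all parties."""
    return mean(scores.values())

def disagreement(scores):
    """Spread between the most and least favorable judgment."""
    return max(scores.values()) - min(scores.values())

for plan, scores in judgments.items():
    print(plan, aggregate(scores), disagreement(scores))
```

Both plans receive the same aggregate score, although one is strongly contested and the other leaves everybody indifferent. A decision guide that reports only the aggregate cannot tell them apart.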

Briefly, this means that even for the simple assumption of a single designer working on a personal planning issue, the ‘perspective’ of the first stage of ‘understanding the system’ will already include considerations of context, design (leverage point) and performance. So should a ‘model’ of the planning system more visibly and explicitly account for all of this, to meet both systems and evaluation expectations?

Acknowledging the roles of the many parties involved in planning projects

As the perspective of planning activities here has become more complex, aspects such as ‘differences of opinion’ have crept into the discussion. But the diagrams still look like the ‘perspective’ of a single person – initiator of the process by raising a problem or issue, designer, developer of the model, ‘client’ of the investigation effort, decision-maker — all in one. In actual planning projects all these roles will be real people or parties. And they will all have different ‘perspectives’ of the problem, the project, and plan proposals. Must the overall model not acknowledge that each party involved in a planning project will come to the table with a different perspective: view of the situation, assessment of components and relationships and their significance, and, above all, different views and judgments regarding what is a ‘good’ plan and outcome? Obviously, a realistic model of the planning system (not only the system to be planned but the planning process itself), must take all these roles into account. If there is to be a collective decision ‘on behalf of all affected parties’ or for entire communities, society as a whole: shouldn’t the question or task of how that decision can be forged from all those perspectives into one justifiable story be a distinct part of the systems model of planning?

Is the assumption that there ‘is’ an achievable single valid general perspective even for the ‘objective’ aspects requiring due consideration an unlikely, wishful-thinking one? Many approaches seem to make it appear that the eventual decision will be ‘rationally’ based on such a common perspective. Can we reasonably expect that the ‘approach’ of a planning process will result in all participants sharing the same perspective? Does the slogan ‘planning decisions should be based on the facts’ express the position that a commonly shared perspective can be achieved only for the ‘factual’ aspects of the information involved – and that these – only these – should determine the decision?

Consider another common slogan that begins to acknowledge differences of opinion: “Planning decisions should be based on the merit of arguments” (the ‘pros and cons’ about a proposal). Does this expectation or demand assume that the issues about the pro and con arguments can be ‘settled’ to determine the decision (if ‘settling’ means everybody adopting a common perspective)? Information systems (e.g. IBIS: ‘Issue Based Information Systems’, and APIS: ‘Argumentative Planning Information Systems’) based on the Argumentative Model of planning, as well as the procedural recommendations for collective planning processes drawn from the model, aim at inviting and bringing all the information that could become part of solution designs and arguments about them to the attention of the designers and decision-makers. The attempt to devise procedures for the evaluation of the arguments we routinely use in planning will quickly show that those ‘planning arguments’ [4] contain not only factual but also deontic (OUGHT) premises. Logic asserts that their deontic conclusions (‘Plan A ought to be adopted’) cannot be derived from facts alone: ‘planning arguments’ are not ‘deductively valid’ from a formal logic point of view, though they are used, in various disguised forms, all the time in planning and policy-making. And planning decisions do not rest on single ‘clinching’ arguments but on ‘weighing’ of the whole set of ‘pro’ and ‘con’ arguments. So should the systems planning model include all these, including the plausibility, quality, significance assessments (perspectives) of all the parties involved? And how should it describe the way all these considerations will or should guide the decisions? Most of these issues still need to be investigated and discussed more thoroughly before they can become generally accepted ‘practice’.

The role of creativity and imagination in a systems model of planning

As if the difficulties discussed so far were not challenging enough, there is an underlying sense of ‘something missing’ in the picture of the systems model of planning: the issue of creativity. If the systems models of the solution generation phase (whether in a linear or cyclical version) are built from the ‘objective fact’ information in the context, does creative activity merely consist of inventing new combinations of knowledge items (as some definitions of design actually suggest, and as techniques such as Brainstorming assume)? Where do truly surprising, innovative ideas come from, and how do they fit into the systems model of design and planning?

Going back to the ‘perspective’ image of context knowledge: a person’s ‘model’ of a situation was described as context information coming to a perceiving entity through a ‘screen’ (like the glass panes that the Renaissance painters who invented perspective used to trace the image of a scene; the very term ‘perspective’ means ‘seen through’). The emerging picture of points and connections seen through the glass began to look like the real scene.

But then the artists added new items to the pictures: figures of people they may have studied and drawn on different ‘screens’, but also entirely ‘imagined’ entities such as angels, fairytale heroes, villains, sorcerers, monsters, and imagined sceneries and landscapes. Just harmless, ‘not real’ fairytale illustrations? When communicated, shown to others, these stories and sceneries come to viewers and listeners as ‘real’ context items. This created ‘Santa Claus’ problems of understanding the difference between ‘real reality’ and its models, and models of imagined reality, which now were communicated to others and began to have very real effects in the real world. So a description of the IS/OUGHT discrepancy of a perceived problem as the difference between the variable value of an ‘objective’ property of a context entity and the perceiver’s opinion that a different value of that variable would be ‘better’ or more desirable does not capture the phenomenon of imagination of entities not (yet) found in objective reality. Doesn’t true designers’ creativity lie in just this kind of imagination?

Without going into the question of how such creative imagery comes about in designers’ (and other people’s) minds, can we say that a person’s ‘perspective’ should be seen as the combined picture of ‘real context’ representation and all the creatures populating the person’s imagination? To the extent imagination plays a significant role in creative solution development, it would seem necessary. The question here is then about how systems or other models of planning should accommodate the phenomenon. Taking the call for understanding the ‘whole’ system seriously, does this now mean that the planning process must consider all perspectives of all affected and involved parties in a planning project?

Do the difficulties of answering these questions explain the tendency of many ‘brands’ of systems approaches to marginalize or entirely avoid the task of explicit, thorough evaluation? For a comprehensive model of planning to at least account for the presence of these problems, it may be useful to examine the different patterns of this tendency in some detail.

Tendencies to marginalize evaluation in systems practice

The general part of some planning approaches may seem to give due recognition of the different phases including evaluation, and assign equal attention to each phase. For example, the list of phases of the ‘Rational Planning Model’ [2] which adds two more practical stages to the three boxes of Rittel’s model, (I would suggest adding at least ‘decision to implement’ to the list) appears to consider each of the phases as equally deserving attention:

“Definition of the problems and/or goals;

Identification of alternative plans/policies;

Evaluation of alternative plans/policies;

Implementation of plans/policies;

Monitoring of effects of plans/policies.”

However, many accounts of consultant brands of planning on social media give a different impression: their ‘systems models’ of planning focus on ‘early’ phases (‘understanding the system’ and ‘developing solutions’) while marginalizing or entirely avoiding engagement in thorough ‘systematic’ evaluation procedures. The reasons may range from practical concerns about their burdensome complexity, to aims of avoiding the impression of substantial differences of opinions, to a general need to produce ‘positive’ success stories ideally resulting in consensus-supported decision recommendations (leaving the responsibility for actual decision to the ‘clients’ of the project). Even Rittel, who sought to develop a better ‘second generation’ planning approach with his ‘Argumentative Model of Planning’ [1] guiding his proposals for ‘Argumentative Planning Information Systems’ that pointedly focused on the aspects of differences of opinion and perspectives – issues – did not have time before his untimely death to offer a more coherent model of evaluation of the merit of arguments in the planning discourse.

This tendency should be seen as different from a traditional practice that also avoids elaborate evaluation: that of starting from an existing ‘solution’ that is recognized as the ‘current best’ response to a known planning task – for example a house or a car. Such a design or planning project is not seen as a new, unprecedented problem, but as the task of adding an improvement to the precedent solution. The improvement involves only one or a few parts or aspects (sometimes just an aesthetic one), and the project is considered successful if the difference is actually perceived by the public or client as an improvement, so no comprehensive evaluation effort is needed. While this is a quite common and respected practice (all automobile manufacturers offer new, ‘improved’ models every year; architecture students are required to start every new design assignment with a study of precedents), it is usually not claimed to be a ‘systems’ approach since that would require looking at all parts of the ‘whole system’.

While the attitudes of systems consulting are understandable – given the pressures of competition in securing project contracts, financial and time constraints – these trends are arguably not living up to systems-perspective claims of superiority over other approaches, nor the evaluation expectations sketched out in this study. Especially the connection between evaluation results and the eventual decision often remains unresolved: too many decisions end up being made by decision modes and practices that blatantly ignore the evaluation insights or avoid evaluation altogether. A brief indication of some of the tactics used for this may be useful to guide more research and development on this problem.

Several kinds of ‘tactics’ for this ‘evaluation evasion tendency’ can be distinguished. One is the tendency discussed above: to consider the performance variable tracks of simulation models, with data highly aggregated into ‘common good’ measures, as already sufficient to support decisions. This may eliminate many other evaluation aspects from consideration, and hide any opinion differences from sight. The ‘Root Cause’ approach is a variation of this tactic: focusing the effort on identifying the ‘root cause’ of a problem, for which there are only a few ways, or just one, to ‘remove’ it, will greatly simplify the work.

The approach of sequentially narrowing down the ‘solution space’, by eliminating all solutions that seem unsatisfactory on some significant evaluation aspect, to only one remaining solution for which there then is ‘no alternative’, is a more general variation of this tactic.
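A sketch of this narrowing-down tactic, with invented candidate plans, aspects, and acceptability limits (all hypothetical), shows how a single ‘no alternative’ solution can be produced without ever weighing the aspects against each other:

```python
# Hypothetical plan alternatives with three assessed aspects (lower is better).
candidates = {
    "A": {"cost": 80, "travel_time": 30, "noise": 60},
    "B": {"cost": 120, "travel_time": 20, "noise": 40},
    "C": {"cost": 95, "travel_time": 25, "noise": 70},
    "D": {"cost": 90, "travel_time": 45, "noise": 35},
}

# Assumed acceptability limits, applied one aspect at a time, in this order.
limits = [("cost", 100), ("travel_time", 40), ("noise", 65)]

def narrow(candidates, limits):
    """Eliminate every alternative that fails the current aspect's limit;
    stop as soon as at most one alternative is left."""
    remaining = dict(candidates)
    eliminated = {}  # plan name -> aspect on which it was dropped
    for aspect, limit in limits:
        for name, values in list(remaining.items()):
            if values[aspect] > limit:
                eliminated[name] = aspect
                del remaining[name]
        if len(remaining) <= 1:
            break
    return sorted(remaining), eliminated

survivors, eliminated = narrow(candidates, limits)
print(survivors, eliminated)
```

In this example plan B is dropped on cost alone, although it has the shortest travel time of all four; the trade-off between the aspects is never examined, and the outcome depends on the chosen limits and on which alternatives happen to fail each one.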

Reliance on traditional ‘best practice rules’ is another tactic, one that may be labeled as ‘generating single solutions by following rules’. Original trade guilds’ manuals and secrets became elaborate systems of government regulations: designing a plan by systematically observing and following all the regulations at least would ensure the plan getting a permit – a statement of ‘good enough’ but arguably far from helping the resulting plans or buildings to become desirable, good, or beautiful. Regulations typically prescribe ‘minimal’ conditions for permitting and so tend to generate barely tolerable results – a criticism often heard about the environments of architectural and modern urban design.

Theories about ‘good’ design and planning can play a similar role: in architectural and urban design, theories (such as the Pattern Language) prescribe or recommend the generation of plans by combining ‘valid’ components and rules into single solutions whose validity and quality are guaranteed by the method of generating them, so that no additional evaluation effort is needed.

The same result can be achieved by procedural means. Small planning teams, even with variations of user or citizen participation, are facilitated by a moderator of the consulting firm hired for the project and work under tight resource and time constraints as well as psychological pressure: competition, the fact that team members are employees of the ‘client’ company and/or the consultant firm, emphasis on achieving consensus, rewarding ‘cooperation’ and subtly penalizing critical comments (‘attitude’) about flaws in emerging group solutions. All of this aims at generating single solution products. If dissenting discussion persists, or when the assembled team shows signs of fatigue, procedural moderation tools such as the “Any more objections? – so decided!” statement by the facilitator can produce ‘consent’ presented as ‘consensus’.

Finally, the increasing calls must be mentioned for planning to pay attention to ‘crowd wisdom’ or ‘swarm behavior’ as approaches generating ‘single’ solutions whose validity is guaranteed by the crowd or swarm, and which therefore do not require any systematic evaluation efforts. The obvious questions for investigating the validity of such approaches as serious replacements for more thorough and ‘systematic’ planning and policy-making seem to run afoul of the principal assumptions of such approaches: that this would be tantamount to ‘evaluation’, to which crowd wisdom is inherently superior and which it thus makes unnecessary, even detrimental. Some serious discussion and perhaps comparative experiments seem appropriate to find a meaningful answer to these questions.

“Wrong question?”

The reasons for evaluation avoidance may superficially look like somewhat illegitimate ‘shortcuts’ or ‘excuses’ not to have to engage in cumbersome evaluation procedures. But they might also be based on some underlying concerns that should be looked at, in the spirit of both the systems mantra to consider the ‘whole picture’ as well as the ‘wrong question?’ advice to question the basic assumptions of any project.

Some such concerns might be related to the role of large complex planning projects, given the inevitable power relations in society: Can the very sophistication and complexity of the systems perspective, and especially the evaluation procedures related to it, be used as tools of domination and oppression, even as their proponents embrace ‘participation’ and the aim of giving due consideration to ‘all’ expressed opinions and concerns? Or can their apparent inclusiveness of all opinions serve as a legitimization device for avoiding responsibility for making decisions (and for avoiding ‘accountability’ questions about questionable results)? Phenomena like the ‘plausibility paradox’ seem to support such skepticism: the finding that argument evaluation results tend towards the center point of zero (on a +pl/-pl plausibility scale) the more arguments are assessed and the more honest plausibility/probability estimates are applied [section … of the study].

The very examination of the evaluation task itself can lead to impressions of futility of the effort: Are the opinions and judgments that must be made and aggregated into decision guides so subjectively individual, so mired in temporal situation conditions, and based on such incomplete, insufficiently reliable ‘evidence’ (support coming in ‘inconclusive’ argument patterns) that they should never be used as the basis for plans for large projects that will have consequences well beyond individuals’ ‘planning horizons’ and ability to correct mistakes? Is it more meaningful to look for more timeless, universal, general principles tested by experience in many applications over time? There are several theories trying to offer such timeless truths, whose support ranges from personal preferences of the theorists and their followers to connections (e.g. in architecture) to mathematics and geometry. (Of course, even such theories have been accused of becoming tools of cultural dominance and oppression.)

Theories drawing on insights from evolution studies and complexity theories suggest that valid solutions can ‘emerge’ from many small acts coalescing into ‘crowd’ behavior based on ‘crowd wisdom’, instead of being based on grandiose plans implemented by power entities in hierarchical organizational structures. Groups investigating these issues should be encouraged, but they seem to resist considering questions such as what to do about different crowds behaving very differently and thus getting in each other’s way. (Lemmings seem to get in their own self-destructive way… which might be a warning to keep in mind.)

These few examples must suffice to convey the need for taking such attitudes seriously and for establishing a constructive discourse about their applicability to the different forms of plans and responses to challenges.

Summary and suggestions from the investigation.

This brief exploration raised many questions and exposed areas about systems and evaluation that arguably need more work: thinking, research and experiments. But there may be some findings and perhaps lessons that could guide immediate change of perspectives and application patterns.

Does the insight that evaluation judgments are made in all ‘phases’ of systems-guided planning suggest some revisions, even reconstruction, of some prevailing systems models of planning? For example, should systems perspective promotions consider and include the following aspects more clearly?

* Systems models are not reality (a well-known mantra) but representations of people’s selective and incomplete perspectives of parts of ‘reality’. Different people and parties in the planning process, especially, will have significantly different models in their minds. Does the single systems model of a project therefore give a misleading impression that a common understanding of the problem, situation and solution has been achieved?

* Should efforts be encouraged to coordinate the vocabulary and focus of evaluation judgments made at different ‘stages’ of the process, given that early judgments (often not really acknowledged and stated explicitly as such) can eliminate important concerns from subsequent work?

* Should visual representations of both the process and the system being worked on – systems diagrams – be expanded to more explicitly include evaluation and the agents making evaluation judgments?

* Should systems models of planning that inadvertently or intentionally represent the process as a linear sequence be replaced by recursive or ‘parallel processing’ representations?

* Are efforts needed to provide a clearer understanding of the distinction between ‘descriptive’ plan features and ‘evaluative’ judgments? Specifically, should the practice of showing ‘optimal’ behavior of a system over time with descriptive measures as the suggested basis for decisions be changed to complement the ‘objective’ measures with corresponding evaluation judgments, admittedly at the risk that this will reveal significant differences of opinion?

* Should the tendency of deliberately or inadvertently encouraging ‘single-aspect’ decisions be resisted in favor of considering the ‘whole picture’ of evaluation concerns?

* When addressing social issues, should systems practitioners stop claiming that their ‘brand’ approach will ‘optimize’ consensus solutions, offer ‘data-based’, ‘fact-based’ or ‘science-based’ solutions, and solve ‘wicked problems’? (There are always winners and losers in those problems; all ‘solutions’ have costs as well as benefits, which inevitably will be unequally distributed.)

* Should ‘reminders’ or suggestions for coherent and thorough evaluation techniques be ‘posted’ repeatedly, just like systems mantras such as ‘understand the context’, ‘look for relationship loops’, or ‘are we looking at the wrong question/problem?’

* Should examples of more thorough evaluation techniques (among others) be offered in a ‘tool kit’ for participants in planning projects to apply as necessary? No specific procedures can be recommended as mandatory ‘universal’ parts of planning approaches for all types of projects and situations.

* Should ‘templates’ of standard evaluation-related contributions (like template suggestions for planning arguments and their assessment) be offered at critical decision points?

* Should AI efforts be developed to assist evaluation in planning, to focus on such tasks as ‘translating’ verbal comments into templates for systematic evaluation, or the task of aggregating evaluation results into decision guides?

* Should aggregation tools that aim at ‘averages’ of evaluation results (prematurely aiming at ‘consensus’ outcomes) be accompanied by aggregation formats that reveal potential conflicts or differences of opinion? At a minimum, for example: Churchman’s ‘counter-planning’ suggestion based on radically different starting premises and goal statements (e.g. turning an aspect preference ranking list upside down…).

* Should shortcut steps such as ‘root cause’ or system ‘boundary’ judgments be acknowledged as participants’ (modelers’) judgments, not ‘reality-based constraints’?

* Should process provisions allow, even encourage, participants to change initial ‘default’ agreements as appropriate to insights obtained during the process? If ‘the process design is part of the plan’, should collective ‘consensus’ pressures be resisted?

* Is research needed about the reliability of evaluation-avoiding approaches? For example: Can such approaches lead participants to ignore or forget aspects? Do they introduce bias towards theory-based criteria rather than project-related concerns? Do ‘star designer’ decisions serve as responsibility-avoidance tactics?

* Should increased efforts be encouraged to improve transparent connections between evaluation results and decisions? How should these provisions be addressed in more evolved systems models of planning?

Notes

[1] Rittel’s systems model of design: H.W. Rittel: Design methods lectures at U.C. Berkeley.

[2] Rational Planning Model: https://en.wikipedia.org/wiki/Rational_planning_model

[3] Theory of Change: https://en.wikipedia.org/wiki/Theory_of_change

[4] The distinction of ‘planning arguments’ (initially ‘design arguments’) was first introduced, to my knowledge, in my dissertation ‘The Evaluation of Design Arguments’ (U.C. Berkeley, 1977). See also: T. Mann, ‘The Structure and Evaluation of Planning Arguments’, Informal Logic, Dec. 2010; or ‘The Fog Island Argument’, XLIBRIS, 2009.

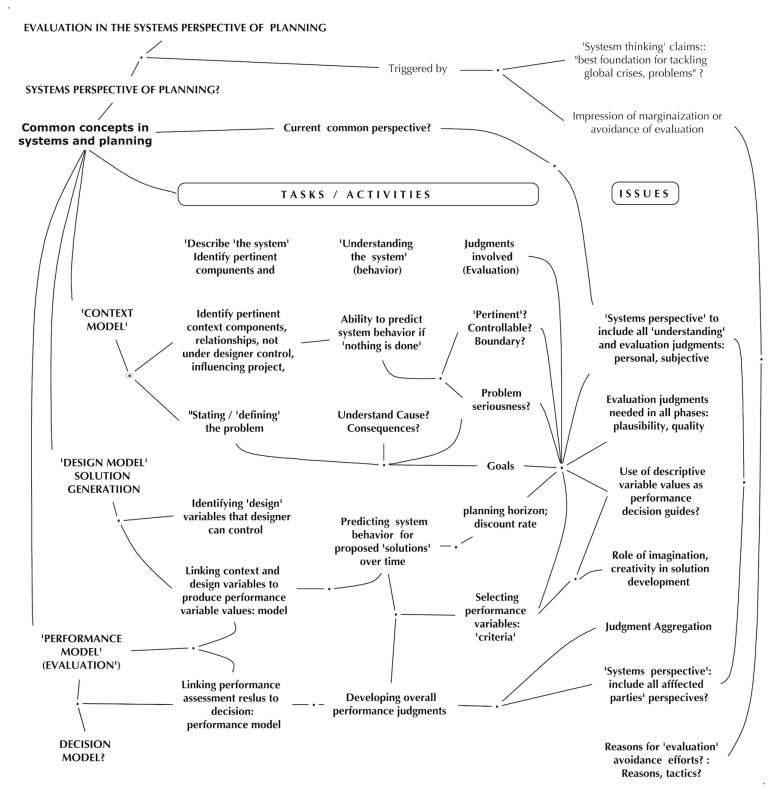

Figure 2 — Evaluation in a systems perspective of planning — overview
