Monthly Archives: May 2019

A Typology for the Systems Field | Rousseau, Wilby, Billingham, Blachfellner | Systema, 2016

A Typology for the Systems Field

Abstract

The field of systems is still a nascent academic discipline, with a high degree of fragmentation, no common perspective on the disciplinary structure of the systems domain, and many ambiguities in its use of the term “General Systems Theory”. In this paper we develop a generic model for the structure of a discipline (of any kind) and of disciplinary fields of all kinds, and use this to develop a Typology for the domain of systems.

We identify the domain of systems as a transdisciplinary field, and propose calling it “Systemology” and its unifying theory GST* (pronounced “G-S-T-star”). We propose names for other major components of the field, and present a tentative map of the systems field, highlighting key gaps and shortcomings. We argue that such a model of the systems field can be helpful for guiding the development of Systemology into a fully-fledged academic field, and for understanding the relationships between Systemology as a transdisciplinary field and the specialized disciplines with which it is engaged.

Systema: connecting matter, life, culture and technology (ISSN: 2305-6991) is a peer-reviewed, open-access journal. All journal content, except where otherwise noted, is licensed under the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

Scientific discovery in a model-centric framework: Reproducibility, innovation, and epistemic diversity

seems rather important!

via complexity digest

original article: https://journals.plos.org/plosone/article?id=10.1371/journal.pone.0216125

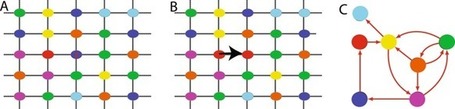

Consistent confirmations obtained independently of each other lend credibility to a scientific result. We refer to results satisfying this consistency as reproducible and assume that reproducibility is a desirable property of scientific discovery. Yet seemingly science also progresses despite irreproducible results, indicating that the relationship between reproducibility and other desirable properties of scientific discovery is not well understood. These properties include early discovery of truth, persistence on truth once it is discovered, and time spent on truth in a long-term scientific inquiry. We build a mathematical model of scientific discovery that presents a viable framework to study its desirable properties including reproducibility. In this framework, we assume that scientists adopt a model-centric approach to discover the true model generating data in a stochastic process of scientific discovery. We analyze the properties of this process using Markov chain theory, Monte Carlo methods, and agent-based modeling. We show that the scientific process…

Can animals be usefully described as clockwork machines? | Aeon Essays

I started listening to the EconTalk podcast, I think, partly as a way to broaden my ‘echo chamber’ – the Hoover Institution doesn’t exactly have an intuitive appeal to me! And it turns out to be one of the best, most insightful, and most nuanced podcasts, partly because Russ Roberts manages a perfect blend of inquiry/curiosity and advocacy, being both well aware of and explicit about his preferences and prejudices, and genuinely intellectually interested.

http://www.econtalk.org/

https://en.wikipedia.org/wiki/EconTalk

EconTalk is a weekly economics podcast hosted by Russ Roberts. Roberts, formerly an economics professor at George Mason University, is a research fellow at Stanford University’s Hoover Institution.[1][2] On the podcast, Roberts typically interviews a single guest—often professional economists—on topics in economics. The podcast is hosted by the Library of Economics and Liberty, an online library sponsored by Liberty Fund. On EconTalk Roberts has interviewed more than a dozen Nobel Prize laureates including Nobel Prize in Economics recipients Ronald Coase, Milton Friedman, Gary Becker, and Joseph Stiglitz as well as Nobel Prize in Physics recipient Robert Laughlin.[3]

This episode with Jessica Riskin is a nuanced take on that unthinking trope of ‘the mechanical universe’, a great example of how polarisation obscures real thinking. It also name-checks cybernetics explicitly.

And here’s Jessica Riskin’s perspective on this in a nutshell:

Source: Can animals be usefully described as clockwork machines? | Aeon Essays

<excerpt only as it has a CAPITAL LETTERS thing saying ‘republishing not permitted’ – do go to Aeon – well worth reading!>

Alive and ticking

The idea that nature is a humming, complex, clockwork machine has been around for centuries. Is it due for a revival?

From Voyage to the South Pole and Oceania on the Corvettes Astrolabe and Zélée, during the years 1837-1840 by Jules Sébastien Cesar Dumont d’Urville. Photo by Getty Images

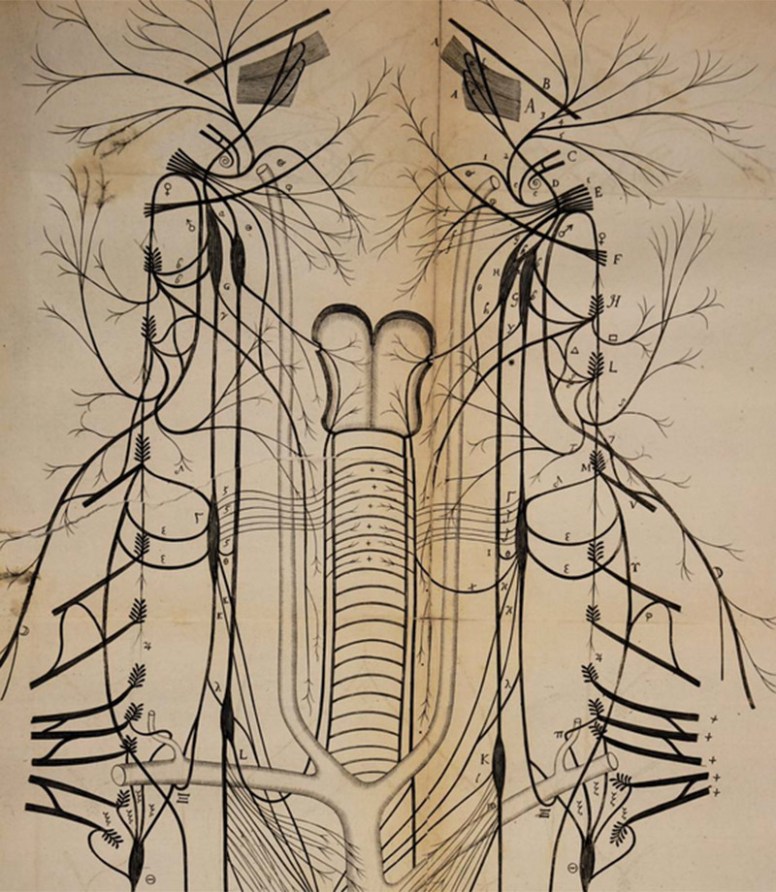

The philosopher René Descartes, who lived for a time near the royal gardens of St Germain-en-Laye just outside Paris, was intrigued by the strange machines installed there. The grounds of the château were abuzz with water-powered automata that cavorted in grottoes, enacting scenes from Greek mythology and playfully splashing their visitors. If these intricate hydraulic mechanisms could perform the defining actions of living things – moving themselves, engaging, interacting – why shouldn’t living things and even human beings be a kind of machinery? ‘I suppose the body to be nothing but a statue or machine made of earth,’ Descartes wrote in Treatise on Man (1633), where he invoked the ‘clocks, artificial fountains, mills, and other such machines which, although only man-made, have the power to move of their own accord’.

At the time, Europe was humming with mechanical vitality. On the grounds of palaces and wealthy estates, 16th- and 17th-century Europeans built theme parks featuring puckish androids that chased after or hid from guests, sprayed them with water, flour or ash, made faces and sang songs. In churches and cathedrals, automaton angels sang and prayed; horrible devils rolled their eyes and flailed their wings; the Holy Father made gestures of benediction; and mechanical Christs grimaced on the cross as Virgins ascended Heavenwards.

The model of nature as a complex, clockwork mechanism has been central to modern science ever since the 17th century. It continues to appear regularly throughout the sciences, from quantum mechanics to evolutionary biology. But for Descartes and his contemporaries, ‘mechanism’ did not signify the sort of inert, regular, predictable functioning that the word connotes today. Instead, it often suggested the very opposite: responsiveness, engagement, caprice. Yet over the course of the 17th century, the idea of machinery narrowed into something passive, without agency or force of its own life. The earlier notion of active, responsive mechanism largely gave way to a new, brute mechanism.

Brute mechanism first developed as part of the ‘argument from design’, in which theologians found evidence for the existence of God in the rational design of nature, and therefore began treating nature as an artefact…

Continues in source: Can animals be usefully described as clockwork machines? | Aeon Essays

Marshall McLuhan – extensions and amputations

Aidan Ward and Philip Hellyer are continuing their Gently Serious blog:

The latest from Aidan concerns ‘health’ and is well worth reading. It led me, too, to suggest that we need to think about Marshall McLuhan in terms of systems thinking and particularly his concept ‘every extension is also an amputation’ – an adaptive point which perhaps simply echoes ‘take what you want, says the Lord – only pay the price’.

I couldn’t find anything better to explain this concept than the two cuttings below.

cheers

Benjamin

Source: Marshall McLuhan: “The Medium is the Message”

Technology as Extensions of the Human Body

In our continuing look at Marshall McLuhan, the man who coined the term “global village” and the phrase “the medium is the message,” we will reflect on what he had to say about the various ways human beings extend themselves, and how these extensions affect our relationships with one another. First, we must understand what McLuhan meant by the term “extension(s).”

An extension occurs when an individual or society makes or uses something in a way that extends the range of the human body and mind in a fashion that is new. The shovel we use for digging holes is a kind of extension of the hands and feet. The spade is similar to the cupped hand, only it is stronger, less likely to break, and capable of removing more dirt per scoop than the hand. A microscope, or telescope is a way of seeing that is an extension of the eye.

Considering more complicated extensions, one might think of the automobile as an extension of the feet. It allows man to travel places in the same manner as the feet, only faster and with less effort. In addition, this extension enables one to travel in relative comfort in extreme weather conditions. Most individuals already understand the concept of extension, but many are unreflective when it comes to what McLuhan calls “amputations,” the counterpart to extensions.

Every extension of mankind, especially a technological extension, has the effect of amputating or modifying some other extension. An example of an amputation would be the loss of archery skills with the development of gunpowder and firearms. The need to be accurate with the new technology of guns made the continued practice of archery obsolete. The extension of a technology like the automobile “amputates” the need for a highly developed walking culture, which in turn causes cities and countries to develop in different ways. The telephone extends the voice, but also amputates the art of penmanship gained through regular correspondence. These are a few examples, and almost everything we can think of is subject to similar observations.

McLuhan believed that mankind has always been fascinated and obsessed with these extensions, but too frequently we choose to ignore or minimize the amputations. For example, we praise the advantages of high speed personal travel made available by the automobile, but do not really want to be reminded of the pollution it causes. Additionally, we do not want to be made to think about the time we spend alone in our cars isolated from other humans, or the fact that the resulting amputations from automobiles have made us more obese and generally less healthy. We have become people who regularly praise all extensions, and minimize all amputations. McLuhan believed that we do so at our own peril.

The Dangers of Over-extended Technology

We have discussed the idea of extensions and amputations caused by new technology, which is introduced into society. The automobile was previously mentioned as an extension of the foot. The car allows one to travel, just as the foot does, only faster and with less effort. The amputations which result would include loss of muscle strength in the under-utilized legs, and the reduction in the quality of air we breathe.

Something occurs when a medium like the automobile, used for transportation, becomes over-extended. The resulting amputations such as muscle atrophy, smog, and high-speed fatalities increase at a rate that challenges the benefits initially gained. Automobile fatalities, lung disease, and obesity caused by modern transportation begin to outweigh the benefits of getting to our destinations quicker and with less effort. The final movement is the reversal of the benefits. McLuhan said:

Although it may be true to say that an American is a creature of four wheels, and to point out that American youth attributes much more importance to arriving at driver’s-license age than at voting age, it is also true that the car has become an article of dress without which we feel uncertain, unclad, and incomplete in the urban compound.{8}

To this observation might be added the fact that we train children from a very young age to stand within a few feet of high-speed vehicles without being afraid. Less than two hundred years ago a screaming locomotive or a high speed automobile would have caused a person to flee in terror for their lives. We have slowly conditioned ourselves to not be afraid of something that is in fact extremely dangerous. Similarly, we know that speed limits of twenty miles an hour would almost certainly eliminate most car fatalities, but we also consider the advantages of getting to our destinations quicker to be worth the resulting death rate. Proof of this casual acceptance of the disadvantages of the car could be imagined if one were to consider the fate of a political candidate who ran on a platform of reducing the national speed limit to twenty miles per hour. We know the advantages, even before implementation, but we choose to accept the disadvantages because there is a privileging of all types of technological extension, even deadly and horrific forms.

We are now prepared to consider the specific types of extensions realized by the television, mobile phone, and computer. If we take McLuhan’s lead then all of these must be simultaneously considered as extensions with both positive and negative amputations of previous technologies.

Source: Teaching McLuhan: Understanding Understanding Media | enculturation

Media as Extensions of Ourselves

The core of McLuhan’s theory, and the key idea to start with in explaining him, is his definition of media as extensions of ourselves. McLuhan writes: “It is the persistent theme of this book that all technologies are extensions of our physical and nervous systems to increase power and speed” (90) and, “Any extension, whether of skin, hand, or foot, affects the whole psychic and social complex. Some of the principal extensions, together with some of their psychic and social consequences, are studied in this book” (4). From the premise that media, or technologies (McLuhan’s approach makes “media” and “technology” more or less synonymous terms), are extensions of some physical, social, psychological, or intellectual function of humans, flow all of McLuhan’s subsequent ideas. Thus, the wheel extends our feet, the phone extends our voice, television extends our eyes and ears, the computer extends our brain, and electronic media, in general, extend our central nervous system.

In McLuhan’s theory language too is a medium or technology (although one that does not require any physical object outside of ourselves) because it is an extension, or outering, of our inner thoughts, ideas, and feelings—that is, an extension of inner consciousness. McLuhan sees the enormous implications of the development of language for humans when he writes: “It is the extension of man in speech that enables the intellect to detach itself from the vastly wider reality. Without language . . . human intelligence would have remained totally involved in the objects of its attention” (79). Thus, spoken language is the key development in the evolution of human consciousness and culture and the medium from which subsequent technological extensions have evolved.

But recent extensions via electronic technology elevate the process of technological extension to a new level of significance: “Whereas all previous technology (save speech, itself) had, in effect, extended some part of our bodies, electricity may be said to have outered the central nervous system itself, including the brain” (247). Thus, pre-electric extensions are explosions of physical scale outward, while electronic technology is an inward implosion toward shared consciousness, a change that has significant implications. McLuhan states: “Our new electric technology that extends our senses and nerves in a global embrace has large implications for the future of language” (80). This electronic extension of consciousness is one about which McLuhan himself seems conflicted, as when he writes:

Rapidly, we approach the final phase of the extension of man—the technological simulation of consciousness, when the creative process of knowing will be collectively and corporately extended to the whole of human society, much as we have already extended our senses and nerves by the various media. Whether the extension of consciousness, so long sought by advertisers for specific products, will be ‘a good thing’ is a question that admits of a wide solution. (3-4)

Thus, it is incorrect to categorize McLuhan as either a technophile or a technophobe, as his critics often try to do. McLuhan is more interested in exploring the implications of our technological extensions than in classifying them as inherently “good” or “bad.”

At times McLuhan speaks of a movement toward a global consciousness in positive terms, as when he writes: “might not our current translation of our entire lives into the spiritual form of information seem to make of the entire globe, and of the human family, a single consciousness?” (61). But at other times, he expresses reservations about this development: “With the arrival of electric technology, man extended, or set outside himself, a live model of the central nervous system itself. To the degree that this is so, it is a development that suggests a desperate and suicidal autoamputation . . .” (43). Thus, one of McLuhan’s key concerns in Understanding Media is to examine and make us aware of the implications of the evolution toward the extension of collective human consciousness facilitated by electronic media.

Scale-free networks revealed from finite-size scaling

Networks play a vital role in the development of predictive models of physical, biological, and social collective phenomena. A quite remarkable feature of many of these networks is that they are believed to be approximately scale free: the fraction of nodes with k incident links (the degree) follows a power law $p(k) \propto k^{-\lambda}$ for sufficiently large degree k. The value of the exponent λ as well as deviations from power law scaling provide invaluable information on the mechanisms underlying the formation of the network such as small degree saturation, variations in the local fitness to compete for links, and high degree cutoffs owing to the finite size of the network. Indeed real networks are not infinitely large and the largest degree of any network cannot be larger than the number of nodes. Finite size scaling is a useful tool for analyzing deviations from power law behavior in the vicinity of a…

Reconciling cooperation, biodiversity and stability in complex ecological communities

Empirical evidence shows that ecosystems with high biodiversity can persist in time even in the presence of few types of resources and are more stable than low biodiverse communities. This evidence is contrasted by the conventional mathematical modeling, which predicts that the presence of many species and/or cooperative interactions is detrimental for ecological stability and persistence. Here we propose a modelling framework for population dynamics, which also includes indirect cooperative interactions mediated by other species (e.g. habitat modification). We show that in the large system size limit, any number of species can coexist and stability increases as the number of species grows, if mediated cooperation is present, even in the presence of exploitative or harmful interactions (e.g. antibiotics). Our theoretical approach thus shows that appropriate models of mediated cooperation naturally lead to a solution of the long-standing question about the complexity-stability paradox and on how highly biodiverse communities can coexist.

Source: www.nature.com

Murray Gell-Mann passes away at 89

— Read on comdig.unam.mx/2019/05/24/murray-gell-mann-passes-away-at-89/

Closing the gaps – John Raven, 2017 (pdf) – and Bookchin’s Law

Closing the gaps

John Raven

This paper traces the origins of the educational system’s widespread inability (despite outstanding exceptions) to achieve its most widely agreed goal – i.e. to nurture and recognise the wide range of talents pupils possess. Among the reasons for this neglect is the absence of a shared framework for thinking about the nature, development, and assessment of high-level competencies. Unfortunately, more basic reasons include (i) the absence of a governance system which would facilitate experimentation, innovation, and learning, and (ii) a network of social forces which lead the system to concentrate on manufacturing and legitimising hierarchy in society. Evolving a new governance system and finding ways of harnessing the forces contributing to hierarchy thus become our top priorities.

Keywords: Systems roots of gross deficits in educational system; Nature, development, and assessment of competence; Requisite developments in governance; Hegemony of hierarchical thinking; Conceptualising, mapping, measuring, and harnessing social forces.

quote:

The second, which I will term ‘Bookchin’s law’ is an extension of Parkinson’s law – which asserts that work expands to fill the time allotted to it. Bookchin’s law, which I will discuss more fully later, asserts that, in a situation of surplus labour, a network of social processes result in the creation of huge amounts of hierarchically-organised work which delivers few benefits other than those delivered directly through participating in it

source: http://www.eyeonsociety.co.uk/resources/Closing-the%20gap-2017-short-E-CP-as-published.pdf

Forrester’s Law – The New York Times

A new social law that may rank alongside Parkinson’s Law on the proliferation of bureaucracy is gaining acceptance in some technological quarters. Called Forrester’s Law, this maxim holds that in complicated situations efforts to improve things often tend to make them worse, sometimes much worse, on occasion calamitous.

Inquiring systems and asking the right question | Mitroff and Linstone (1993) – Coevolving Innovations – David Ing

Inquiring systems and asking the right question | Mitroff and Linstone (1993)

Fit the people around an organization; or an organization around the people? Working backwards, say @MitroffCrisis + #HaroldLinstone, from current concrete choices to uncertain futures, surfaces strategic assumptions in a collective decision, better than starting with an abstract scorecard to rank candidates. The Unbounded Mind is an easier-reading follow-on to The Design of Inquiry Systems by C. West Churchman.

This scorecard metaphor shows up in the second of five ways of knowing (i.e. inquiring systems).

Chapter 3 is “The World as a Formula: The Second Way of Knowing”. A case study commonly used in business school education is described.

To illustrate the use and meaning of the Analytic-Deductive IS in a social realm, we’ll apply it to a situation that on the surface at least is as “simple” as the question that occupied us in the last chapter. There is a somewhat dated yet classic case in the Harvard Business Review that provides a perfect depiction of the Analytic-Deductive IS. [5] Four men are running for the presidency of a fictitious life insurance company, Zenith Life. Background information on their strengths and weaknesses, families, career history, skills, and so on, is given for all four, although we do not receive the same information for each of them. Thus, we know more about one candidate in one category than we do about another. Also, the history and current nature of Zenith Life itself, its prospects and problems, its opportunities as well as threats, are described. The central question of the case is, “Which of the four candidates is best qualified to head Zenith Life, given both its past history and its current condition?” [pp. 41-42]

- [5] Abraham T. Collier, “Decision at Zenith Life,” Harvard Business Review, January-February 1962, Vol. 40, No. 1, pp. 139-157

In all the years that we have given this seemingly “simple case” to scores of students and executives, the typical response has remained remarkably the same. Almost every student and executive — whether they worked individually on the case or in small groups — built a single, simple model that selects one and only one of the candidates as best for Zenith Life. The models are virtually the embodiment of Analytic-Deductive reasoning whether the students and executives were aware of this or not; in most cases, they were not.

The models essentially work as follows. A set of attributes that are characteristic of leadership is determined or specified: for instance, how charismatic each of the candidates is; their capacity to inspire others; the ability to formulate a vision of what Zenith Life needs to be in the coming decade; to present one’s ideas in a direct and persuasive manner so that others will want to join on; a clear sense of ethics and the ability to make decisions that are ethical and moral; their past job performance — job history, personality, and so on. Other variables such as “family support” were also included. Each candidate is then scaled on each attribute to the degree that the individual either embodies or possesses it. Typically, a score of “1” represents the absence of a particular attribute or poor performance on it, whereas “10” indicates the complete possession of an attribute or high performance. On more sophisticated models, the attributes are weighted differently so that, for example, the category “ethics” might be rated three times more important than one’s score in the area of “past job performance.” The “best candidate” to run Zenith Life is then selected on the basis of who has the highest score on all the attributes and their weightings. [p. 42]

So, the scorecard would look something like this:

| Attribute | Weighting | Candidate #1 | Candidate #2 | Candidate #3 | Candidate #4 |
| --- | --- | --- | --- | --- | --- |
| Charisma | a % | ? / 10 | ? / 10 | ? / 10 | ? / 10 |
| Capacity to inspire others | b % | ? / 10 | ? / 10 | ? / 10 | ? / 10 |
| Ability to formulate vision for next decade | c % | ? / 10 | ? / 10 | ? / 10 | ? / 10 |
| Presenting persuasively for followers | d % | ? / 10 | ? / 10 | ? / 10 | ? / 10 |
| Ethics, moral decisions | e % | ? / 10 | ? / 10 | ? / 10 | ? / 10 |
| Past job performance | f % | ? / 10 | ? / 10 | ? / 10 | ? / 10 |
| Weighted total | | ? | ? | ? | ? |
| Rank (of 4) | | #? of 4 | #? of 4 | #? of 4 | #? of 4 |

By the analytic-deductive scorecard, the “objective” top-ranked candidate has the highest weighted score. However, this may not be the “best” way to select a candidate.
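
To make the arithmetic of such a scorecard concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the attribute names are abbreviated, the scores and weightings are invented (the case gives no numbers), and the helper names `weighted_totals` and `rank` are hypothetical. It only shows how a weighted sum produces a ranking, and how a different set of weightings can put a different candidate on top.

```python
from typing import Dict, List


def weighted_totals(
    scores: Dict[str, Dict[str, float]], weights: Dict[str, float]
) -> Dict[str, float]:
    """Weighted average of attribute scores (1-10) for each candidate."""
    total_weight = sum(weights.values())
    return {
        candidate: sum(weights[a] * attr_scores[a] for a in weights) / total_weight
        for candidate, attr_scores in scores.items()
    }


def rank(totals: Dict[str, float]) -> List[str]:
    """Candidates ordered from highest to lowest weighted total."""
    return sorted(totals, key=totals.get, reverse=True)


# Hypothetical scores: invented for illustration, not taken from the case.
scores = {
    "Candidate #1": {"charisma": 9, "ethics": 4, "past_performance": 6},
    "Candidate #2": {"charisma": 5, "ethics": 9, "past_performance": 5},
}

# Two hypothetical weightings, i.e. two different "models" of leadership.
weights_charisma_first = {"charisma": 3, "ethics": 1, "past_performance": 1}
weights_ethics_first = {"charisma": 1, "ethics": 3, "past_performance": 1}

for label, weights in [
    ("charisma weighted x3", weights_charisma_first),
    ("ethics weighted x3", weights_ethics_first),
]:
    totals = weighted_totals(scores, weights)
    print(label, "->", rank(totals), totals)
```

With these made-up numbers the top-ranked candidate flips when the weighting changes, which is one small way to see that the “objective” answer depends on the assumptions built into the model.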

Almost never does an individual or a group build more than one model in order to demonstrate explicitly that, depending on the initial assumptions one makes, not only can one specify very different leadership attributes — and hence build very different models — but, as a result, one can select very different candidates as “best.” Even rarer is the individual or group — although this has occurred — who turns the whole case on its head by working backwards with the presumption that each of the candidates is “best,” but for a very different kind of company. That is, suppose one starts by assuming that each candidate is “best” and then asks the critical question, “What are the characteristics of the different kinds of companies for which each is ‘best’?” This approach thus creatively reverses the whole decision as one of specifying a new company to carry Zenith Life ahead in the coming decades. [pp. 42-43]

The criticism comes from Mitroff having been a coauthor of Strategic Assumption Surfacing and Testing for approaching ill-structured problems (much of which was developed alongside West Churchman’s doctoral supervision).

In essence, nearly everyone who reads the case and analyzes it assumes, almost without question, that it is a bounded, well-structured problem. Most people believe that the attributes or characteristics of leadership are obvious or self evident; much like a machine, that phenomenon of leadership can be decomposed or broken down into its constituent parts. In addition, not only do they assume that any individual’s leadership abilities can be scaled in terms of each of the separate components, but, further, that the weighted sum of scores on each component makes sense and is virtually the same as the whole phenomenon itself. To put it mildly, this is quite a body of assumptions.

While there is often much argument and heated debate between various individuals and groups over who has the single best or the right model, very few individuals or groups doubt that “out there,” somewhere, the definitive book, expert, or mathematical model on leadership exists. In essence, the fundamental assumption is that critical human problems can be reduced to a formula, a cookbook mechanical procedure. The trick is just to find the right model and apply it correctly.

It’s all that simple — or is it really? Of course not. Indeed, it is often far easier to convince people that there are no simple models than to persuade them that there are. To see this, suppose we change the questions of this and the last chapter. [p. 43]

It’s possible that the preference for an “objective” answer is to reduce conflict. For greater creativity, perhaps more conflict is desired.

Instead of asking the seemingly neutral question that in turn seems to call for a factual response, “What are the expected tonnages in steel for the U.S. versus Japan in the year 2000?,” suppose we had asked instead a much more volatile question such as, “Suppose someone very dear and close to you and in their early teens had been raped brutally; whom would you appoint as a panel of experts to make the critical decision whether to grant an abortion or not?” Further, instead of asking for a model on something so prosaic as leadership as we did in this chapter, suppose that we had asked instead, “Build a model to make the decision whether to grant an abortion or not?” It comes as neither a great shock nor a surprise that these questions are treated very differently and evince very different responses. Now different assumptions become extremely vital. The discussion becomes even more heated between individuals and groups. The consensus over experts or models that may have flowed freely and easily before has all but evaporated. Everything has suddenly become contentious, as well it should. The problems or questions are no longer well structured. The very phrasings of the initial questions, which were not in dispute before and perhaps were even irrelevant, now become exceedingly critical. The ways in which the questions are posed and the assumptions made bear heavily on what counts as answers. The feelings aroused are so strong that they spill over to the supposedly more neutral and well structured issues, so that if we ask the question of forecasting steel production in the year 2000 and the selection of a president for Zenith Life after we have asked the more inflammatory questions, then these have become ill-structured issues as well. [pp. 43-44]

Much later in the book, in Chapter 6, “Unbounded Systems Thinking: The Fifth Way of Knowing”, I noticed an exceptionally concise compression of the linkages from Edgar A. Singer (the doctoral supervisor of C. West Churchman) down to Ian Mitroff’s thinking.

In 1896, the great American philosopher William James of Harvard University wrote a letter to Provost Harrison of the University of Pennsylvania recommending Edgar Arthur Singer for a position in philosophy at his institution. James wrote that in his thirty years of teaching philosophy, Singer was the “best all around student” that he had had “in the philosophic business.” There was no aspect of philosophy that Singer could not do well.

Singer went on to a long and distinguished career in American philosophy. Among his many outstanding students was C. West Churchman from whom Mitroff studied philosophy of science at the University of California, Berkeley, in the 1960s.

The point of this all too brief bit of history is not just that the first author can trace his intellectual lineage back to one of the world’s most distinguished philosophers, and one whom both authors admire greatly, but that Singer was one of the most important participants in the founding of the modern systems approach. Churchman in turn extended Singer’s ideas significantly and their ideas form the philosophical basis for the modern systems approach. [p. 92]

With that, the Systems Approach is briefly described.

The upshot of Singer’s analysis was that there were no elementary or simple acts in any science or profession to which supposedly more complex situations could be reduced. Every act or action performed by humans was complex and therefore had within it a complex series of other actions. Furthermore, unlike the scientists and the philosophers of his day who believed that some sciences such as mathematics or physics were the most basic or fundamental, Singer believed that there were no fundamental sciences to which all others could be reduced. Since it was necessary at some point to involve every science in the actions of every other science, all the sciences and professions were equally fundamental. No single science stood at the top of the totem pole or hierarchy of science and in essence, every science depended on every other.

This fundamental notion of interconnectedness, or nonseparability, forms the basis of what has come to be known as the Systems Approach. In essence, the Systems Approach postulates that since every problem humans face is complicated, they must be perceived as such, that is, their complexity must be recognized, if they are to be managed properly. Notice the emphasis on the critical words “managed properly.” As a critical human activity, science, or the creation of a very special kind of knowledge, must be conceived of and managed as a whole system. [pp. 94-95]

The Unbounded Mind has been an easy-reading entry into the Systems Approach for many. It’s worth reading.

References

Churchman, C. West. 1971. The Design of Inquiring Systems: Basic Concepts of Systems and Organization. Basic Books. Alternate search at https://scholar.google.com/scholar?cluster=2520515633142315676 . Snippet view at https://books.google.com/books?id=ZGhQAAAAMAAJ

Mitroff, Ian I., and Harold A. Linstone. 1993. The Unbounded Mind: Breaking the Chains of Traditional Business Thinking. New York: Oxford University Press. Alternate search at https://scholar.google.com/scholar?cluster=12095226026166110830. Preview at https://books.google.com/books?id=NyV-BwAAQBAJ

Systemic Leadership & Change Network

new, intriguing, paid platform from Jennifer Campbell

Source: Welcome to Systemic Leadership & Change Network

Panarchy

Other links:

https://www.resalliance.org/panarchy

https://en.wikipedia.org/wiki/Panarchy

Main source: Panarchy

Panarchy

What is Panarchy?

Panarchy is a conceptual framework to account for the dual, and seemingly contradictory, characteristics of all complex systems – stability and change. It is the study of how economic growth and human development depend on ecosystems and institutions, and how they interact. It is an integrative framework, bringing together ecological, economic and social models of change and stability, to account for the complex interactions among both these different areas, and different scale levels (see Scale Levels).

Panarchy’s focus is on management of regional ecosystems, defined in terms of catchments, but it deals with the impact of lower, smaller, faster changing scale levels, as well as the larger, slower supra-regional and global levels. Its goal is to develop the simplest conceptual framework necessary to describe the twin dynamics of change and stability across both disciplines and scale levels.

The development of the panarchy framework evolved out of experiences where “expert” attempts to manage regional ecosystems often resulted in considerable degradation of those ecosystems (Gunderson and Holling, 2002). Regional management efforts are generally linear in nature, targeting the maintenance of certain variables – forest growth rates, river clarity, fish harvest rates, etc.

It was noted that focusing on managing a single variable, usually one of economic interest, generally resulted in other variables in the system changing, sometimes abruptly, and eventually degrading the entire ecosystem. It was also noted that the changes triggered by attempting to sustain a particular variable were changes that occurred so slowly (over decades or more), that they often went unnoticed until they in turn triggered an abrupt change (e.g. the forest became infested, the river became polluted, or the fish stock collapsed).

Basic Concepts in Panarchy

Ecosystem Characteristics

Empirical evidence of natural, disturbed and managed ecosystems identifies four key characteristics:

- Change is neither continuous and gradual, nor continuously chaotic. It is episodic, regulated by interactions between fast and slow variables.

- Different scale levels concentrate resources and potential in different ways, and non-linear processes reorganize resources across levels

- Ecosystems do not have a single equilibrium; multiple equilibria are common. Ecosystems have processes that maintain stability in terms of productivity and biogeochemical cycles; as well as processes that are destabilizing, which provide diversity, resilience and opportunity

- Management systems must take into account these dynamic features of ecosystems and be flexible, adaptive and experiment at scale levels compatible with the levels of critical ecosystem functions.

Stages of the Adaptive Cycle: Basic Ecosystem Dynamics

Panarchy identifies four basic stages of ecosystems, represented in the Figure below: exploitation, conservation, release and reorganization. All ecosystems, from the cellular to the global level, are said to go through these four stages of a dynamic adaptive cycle (see below).

- The exploitation stage is one of rapid expansion, as when a population finds a fertile niche in which to grow.

- The conservation stage is one in which slow accumulation and storage of energy and material is emphasized as when a population reaches carrying capacity and stabilizes for a time.

- The release stage occurs rapidly, as when a population declines due to a competitor or changed conditions.

- Reorganization can also occur rapidly, as when certain members of the population are selected for their ability to survive despite the competitor or changed conditions that triggered the release.
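As a rough illustration of the four stages listed above, the cycle can be sketched as a small state machine. This is a deliberately simplified sketch, not part of the panarchy literature: the names `Stage`, `next_stage` and `run_cycle` are hypothetical, and the strict one-step ordering is an assumption made for illustration (the framework itself only says that some reorganized configurations enter a new exploitation stage).

```python
from enum import Enum
from typing import List


class Stage(Enum):
    """The four stages of the adaptive cycle named in the text."""
    EXPLOITATION = "exploitation"
    CONSERVATION = "conservation"
    RELEASE = "release"
    REORGANIZATION = "reorganization"


# Stage order as listed in the text; wrapping around to the start
# is the simplifying assumption that every cycle repeats.
_ORDER = [
    Stage.EXPLOITATION,
    Stage.CONSERVATION,
    Stage.RELEASE,
    Stage.REORGANIZATION,
]


def next_stage(stage: Stage) -> Stage:
    """Return the stage that follows `stage` in the idealized cycle."""
    i = _ORDER.index(stage)
    return _ORDER[(i + 1) % len(_ORDER)]


def run_cycle(start: Stage, steps: int) -> List[Stage]:
    """Return the sequence of stages visited, including the start."""
    path = [start]
    for _ in range(steps):
        path.append(next_stage(path[-1]))
    return path


if __name__ == "__main__":
    # Walk one full turn of the cycle, starting from exploitation.
    print([s.value for s in run_cycle(Stage.EXPLOITATION, 4)])
```

The sketch captures only the ordering of stages; it says nothing about how long each stage lasts, which the text describes as slow for conservation and rapid for release and reorganization.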

Adaptive Cycles

The four stages of the adaptive cycle described above (analogous to birth, growth and maturation, death and renewal) have three properties that determine the dynamic characteristics of each cycle:

- Potential sets the limits to what is possible – the number and kinds of future options available (e.g. high levels of biodiversity provide more future options than low levels)

- Connectedness determines the degree to which a system can control its own destiny through internal controls, as distinct from being influenced by external variables (e.g. temperature regulation in warm blooded animals, which involves five different physiological mechanisms, is an example of high connectedness)

- Resilience determines how vulnerable a system is to unexpected disturbances and surprises that can exceed or break that control (see below for more details).

The adaptive cycle is the process that accounts for both the stability and change in complex systems. It periodically generates variability and novelty, either internally such as through genetic mutations or adaptation, or by accumulating resources that change the internal dynamics of an ecosystem. These changes are the triggers for experimentation. In the reorganization stage various experiments are tested and resources are reorganized in new configurations, some of which enter a new exploitation stage to repeat the cycle.

Interconnectedness of Levels

Panarchy places great emphasis on the interconnectedness of levels, between the smallest and the largest, and the fastest and slowest. The large, slow cycles set the conditions for the smaller, faster cycles to operate. But the small, fast cycles can also have an impact on the larger, slower cycles. There are many possible points of interconnectedness between adjacent levels; however, two specific points are of particular interest with respect to sustainability:

- “Revolt” – this occurs when fast, small events overwhelm large, slow ones, as when a small fire in a forest spreads to the crowns of trees, then to another patch, and eventually the entire forest

- “Remember” – this occurs when the potential accumulated and stored in the larger, slow levels influences the reorganization. For example, after a forest fire the processes and resources accumulated at a larger level slow the leakage of nutrients, and options for renewal draw from the seed bank, physical structures and surrounding species that form a biotic legacy.

The fast levels invent, experiment and test; the slower levels stabilize and conserve accumulated memory of past, successful experiments. Sustainability in this framework is the capacity to create, test and maintain adaptive capability. Development becomes the process of creating, testing and maintaining opportunity.

Resilience

Resilience is the capacity of an ecosystem to tolerate disturbances without collapsing into a qualitatively different state. The greater the resilience of a particular ecosystem, the more it can resist large or prolonged disturbances. If resilience is low or weakened, then smaller or briefer disturbances can push the ecosystem into a different state, where its dynamics change.

According to this model, after a disturbance, ecosystems evolve through time as ecological niches fill in (increasing connectedness), biomass accumulates (increasing potential) and more successful species outcompete less successful species (decreasing resilience). This makes ecosystems vulnerable to exogenous shocks that cause a release of resources and a period of rapid reorganization.

Once resilience is overwhelmed and an ecosystem enters a new state, restoration can be complex, expensive, and sometimes even impossible. Research suggests that to restore some systems to their previous state requires a return to environmental conditions well before the collapse.

Resilience can be degraded by a large variety of factors which largely depend on underlying, slowly changing variables such as climate, land use, nutrient stocks, human values and policies. Resilience is a characteristic of natural systems. When resilience is weakened it is sometimes possible to restore it. Diversity is believed to be a key issue in restoring resilience – both biological and social diversity are important to the extent they contribute functional redundancy (i.e. similar services can be provided by some element in the diversity). But as biological diversity is lost, or as human systems and institutions become homogenous and rigid, then the likelihood of restoring lost resilience declines.

The ability to anticipate and plan for the future is a unique characteristic of human systems, and has the potential to increase their resilience.

Strengths of Panarchy

Panarchy is a complex and controversial framework for describing ecosystem and human system dynamics and interactions, and it is beyond the scope of this overview to provide a thorough critique. Despite its broad sweep, it does have the advantage of relative simplicity in terms of the basic concepts used to describe an array of complex phenomena. This framework, developed over several years, is solidly based in empirical research across a broad range of ecosystems, and continues to develop conceptually and generate policy-relevant research.

Panarchy is a sophisticated attempt to connect ecosystem functioning with economic activities and human institutions for managing the relation between the two. It is an evidence-based approach that forces us to think in non-linear terms about complex systems, while providing the conceptual tools to understand the complexities involved.

Limitations

Panarchy remains a hypothesis, despite the many empirical studies it has generated. Its broad sweep requires more empirical testing. While it purports to be an integrative model of ecological, economic and social dynamics, its focus is primarily ecological. There are competing attempts at integration,¹ which may also account for the observed phenomena. There are also different ways of thinking about resilience (e.g. Fraser et al, in press). Despite these limitations, the panarchy framework continues to stimulate constructive debate and guide empirical studies.

Relation to Sustainable Scale

Many of the conclusions and observations made within the Panarchy framework are congruent with those regarding sustainable scale. There is recognition that:

- due to the inherent instability of ecosystems it is extremely difficult to detect or predict transitions to new ecosystem equilibria (e.g. when maximum scale might occur, see Considerable Uncertainty)

- sustainability is about retaining capabilities to continue contributing ecosystem services (i.e. natural income does not deplete natural capital, see Natural Capital and Income)

- resilience, the ability to resist disturbances, is a key characteristic of ecosystems (e.g. when throughput exceeds regeneration, resilience is reduced, see Sustainable Or Unsustainable)

- uncertainty is an inherent characteristic of the adaptive cycle and must be a key factor in any ecosystem management activity

- both uncertainty and risk increase with scale (i.e. problems at the global scale pose the greatest risks)

- precautionary policies are necessary to limit surprises (surprises increase as more natural income is used than is regenerated, see Wisdom in Precaution in Visions For A Sustainable Future)

- the interaction of different time cycles is important, and that by the time efforts to keep fast variables within desired limits (e.g. GHG emissions) are recognized, it may be too late to avoid a major system change (e.g. climate stability) (see Climate Change)

- science uses uncertainty to drive inquiry, while vested interests use and foster uncertainty to maintain the status quo

- biodiversity is an important component of resilience, and is therefore important even if the types of biodiversity have no market value (see Biodiversity)

- new institutions are needed that gather better information on the slow variables, place greater emphasis on the future, maintain social flexibility for adaptive response, and maintain and restore ecosystem resilience (see Institutions for a Sustainable Future)

- economic globalization contributes to simplification of ecosystems (as well as to their degradation), reducing resilience.

Panarchy is not a way of measuring sustainable scale. It does provide some interesting and challenging conceptual tools to assist in our understanding of how ecosystems and economic activities and institutions interact. It also identifies a variety of practical approaches to restore and conserve ecological sustainability.

References

Fraser, E., Figge, F., and W. Mabee. “A framework for assessing the vulnerability of food systems to future shocks,” Futures (2004).

Fraser, E. “Social vulnerability and ecological fragility: building bridges between social and natural sciences using the Irish potato famine as a case study,” Conservation Ecology, 7.2 (2003): 9.

¹ Fraser, E. “Social vulnerability and ecological fragility: building bridges between social and natural sciences using the Irish potato famine as a case study,” Conservation Ecology, 7.2 (2003): 9.

Gunderson, Lance and C. S. Holling. Panarchy: Understanding Transformations in Human and Natural Systems. Washington: Island Press, 2002.

“Resilience Alliance Home page.” Resilience Alliance. www.resalliance.org

| © 2003 Santa-Barbara Family Foundation |

Systemic design agendas in education and design research | November 2018, Nousala, Ing, and Jones

Systemic design agendas in education and design research

Abstract

Since 2014, an international collaborative of design leaders has been exploring ways in which methods can be augmented, transitioning from the legacy focus on products and services towards a broad range of complex sociotechnical systems and contemporary societal problems. At the RSD4 Symposium (2015), DesignX co-founder Don Norman presented a keynote talk on the frontiers of design practice and the necessity of advanced design education for highly complex sociotechnical problems. He identified the qualities of these systems as relevant to DesignX problems, and called for systemics, transdisciplinarity and high-quality observations (or evidence) in these design problems. Initial directions were proposed in the first DesignX workshop in October 2015 and published in the design journal Shè Jì. In October 2016, another DesignX workshop was held at Tongji University in Shanghai, overlapping with the timing of the RSD5 Symposium where this workshop was convened. The timing of these events presented an opportunity to explore design education and research concepts, ideas and directions of thought that emerged from the multiple discussions and reflections through this experimental workshop. The aim of this paper is to report on the workshop as a continuing project in the DesignX discourse, and to share reflections and recommendations from this working group.

6| Models and Processes of Systemic Design « Systemic Design

A very rich resource from the RSD7 conference last year.

Source: 6| Models and Processes of Systemic Design « Systemic Design
