This was tweeted with the even bolder claim:

I dunno, man… I feel I must be missing something, is this a scientific proof of something previously only intuitively known? Full paper at bottom.

source:

Entropy production gets a system update | Santa Fe Institute

Entropy production gets a system update

(Image: Pete Linforth/Pixabay)

NOVEMBER 18, 2020

Nature is not homogeneous. Most of the universe is complex and composed of various subsystems, that is, self-contained systems within a larger whole. Microscopic cells and their surroundings, for example, can be divided into many different subsystems: the ribosome, the cell wall, and the intracellular medium surrounding the cell.

The Second Law of Thermodynamics tells us that the average entropy of a closed system in contact with a heat bath (roughly speaking, its “disorder”) always increases over time. Puddles never refreeze back into the compact shape of an ice cube and eggs never unbreak themselves. But the Second Law doesn’t say anything about what happens if the closed system is instead composed of interacting subsystems.

New research by SFI Professor David Wolpert published in the New Journal of Physics considers how a set of interacting subsystems affects the second law for that system.

“Many systems can be viewed as though they were subsystems. So what? Why actually analyze them as such, rather than as just one overall monolithic system, which we already have the results for,” Wolpert asks rhetorically.

The reason, he says, is that if you consider something as many interacting subsystems, you arrive at a “stronger version of the second law,” which has a nonzero lower bound for entropy production that results from the way the subsystems are connected. In other words, systems made up of interacting subsystems have a higher floor for entropy production than a single, uniform system.

All entropy that is produced is heat that needs to be dissipated, and so is energy that needs to be consumed. So a better understanding of how subsystem networks affect entropy production could be very important for understanding the energetics of complex systems, such as cells or organisms or even machinery.

Wolpert’s work builds off another of his recent papers which also investigated the thermodynamics of subsystems. In both cases, Wolpert uses graphical tools for describing interacting subsystems.

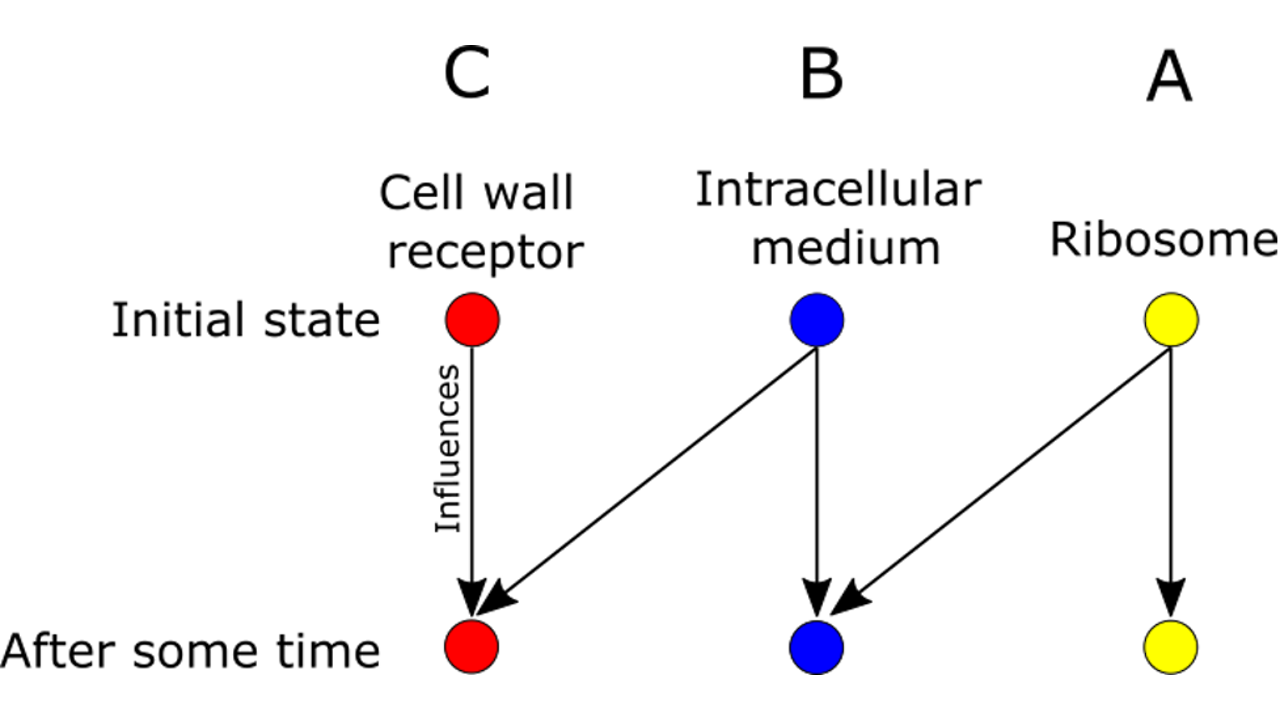

For example, the following figure shows the probabilistic connections between three subsystems — the ribosome, cell wall, and intracellular medium.

Like a little factory, the ribosome produces proteins that exit the cell and enter the intracellular medium. Receptors on the cell wall can detect proteins in the intracellular medium. The ribosome directly influences the intracellular medium but only indirectly influences the cell wall receptors. Somewhat more mathematically: A affects B and B affects C, but A doesn’t directly affect C.
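The "A affects B and B affects C, but A doesn't directly affect C" structure can be written down as a small directed graph. The sketch below is only an illustration of that dependency pattern, not anything taken from the paper: the dictionary `DIRECT`, the node labels, and both helper functions are hypothetical names chosen here.

```python
# Hypothetical encoding of the article's three-subsystem example.
# An entry X: {Y, ...} means "X directly affects Y".
DIRECT = {
    "ribosome": {"medium"},      # A -> B
    "medium": {"cell_wall"},     # B -> C
    "cell_wall": set(),          # C affects nothing in this toy picture
}

def directly_affects(src, dst):
    """True if there is a single edge src -> dst."""
    return dst in DIRECT.get(src, set())

def affects(src, dst):
    """True if dst is reachable from src through one or more direct edges."""
    seen = set()
    stack = list(DIRECT.get(src, set()))
    while stack:
        node = stack.pop()
        if node == dst:
            return True
        if node not in seen:
            seen.add(node)
            stack.extend(DIRECT.get(node, set()))
    return False

# The ribosome reaches the cell wall only through the medium.
print(directly_affects("ribosome", "cell_wall"))  # False
print(affects("ribosome", "cell_wall"))           # True
```

As the article notes further down, the current result depends only on whether such edges exist, not on how strong they are, which is why a plain unweighted graph is enough for this picture.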

Why would such a subsystem network have consequences for entropy production?

“Those restrictions — in and of themselves — result in a strengthened version of the second law where you know that the entropy has to be growing faster than would be the case without those restrictions,” Wolpert says.

A must use B as an intermediary, so it is restricted from acting directly on C. That restriction is what leads to a higher floor on entropy production.

Plenty of questions remain. The current result doesn’t consider the strength of the connections between A, B, and C — only whether they exist. Nor does it tell us what happens when new subsystems with certain dependencies are added to the network. To answer these and more, Wolpert is working with collaborators around the world to investigate subsystems and entropy production. “These results are only preliminary,” he says.

[By Daniel Garisto]

Read the paper, “Minimal entropy production rate of interacting systems,” in New Journal of Physics (November 2020)


PAPER • THE FOLLOWING ARTICLE IS OPEN ACCESS

Minimal entropy production rate of interacting systems

Published 13 November 2020 • © 2020 The Author(s). Published by IOP Publishing Ltd on behalf of the Institute of Physics and Deutsche Physikalische Gesellschaft

New Journal of Physics, Volume 22, November 2020

Abstract

Many systems are composed of multiple, interacting subsystems, where the dynamics of each subsystem only depends on the states of a subset of the other subsystems, rather than on all of them. I analyze how such constraints on the dependencies of each subsystem’s dynamics affect the thermodynamics of the overall, composite system. Specifically, I derive a strictly nonzero lower bound on the minimal achievable entropy production rate of the overall system in terms of these constraints. The bound is based on constructing counterfactual rate matrices, in which some subsystems are held fixed while the others are allowed to evolve. This bound is related to the ‘learning rate’ of stationary bipartite systems, and more generally to the ‘information flow’ in bipartite systems. It can be viewed as a strengthened form of the second law, applicable whenever there are constraints on which subsystem within an overall system can directly affect which other subsystem.
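For readers wondering what "entropy production rate" and "rate matrix" refer to, here is a minimal sketch of the standard textbook (Schnakenberg) entropy production rate for a continuous-time Markov chain coupled to a single reservoir, in units where Boltzmann's constant is 1. It is not the paper's bound and does not build the counterfactual rate matrices mentioned in the abstract. The function name, the convention that `K[i][j]` is the rate from state `j` to state `i`, and the three-state example are all assumptions made here for illustration.

```python
import math

def entropy_production_rate(K, p):
    """Standard entropy production rate of a continuous-time Markov chain.

    K[i][j] is the transition rate from state j to state i (for i != j);
    diagonal entries are ignored. p[j] is the probability of state j.
    Pairs of states with no flow in one of the two directions are skipped.
    """
    total = 0.0
    n = len(p)
    for i in range(n):
        for j in range(i + 1, n):
            flux_ij = K[i][j] * p[j]  # probability flow j -> i
            flux_ji = K[j][i] * p[i]  # probability flow i -> j
            if flux_ij > 0 and flux_ji > 0:
                # Each term is non-negative, and zero only if the flows balance.
                total += (flux_ij - flux_ji) * math.log(flux_ij / flux_ji)
    return total

# Hypothetical three-state ring: rate 2 clockwise (0 -> 1 -> 2 -> 0),
# rate 1 counter-clockwise. The uniform distribution is stationary.
K_ring = [
    [0.0, 1.0, 2.0],
    [2.0, 0.0, 1.0],
    [1.0, 2.0, 0.0],
]
p_uniform = [1 / 3, 1 / 3, 1 / 3]
print(entropy_production_rate(K_ring, p_uniform))  # ln 2, about 0.693
```

With symmetric rates the forward and backward flows cancel pairwise and the function returns zero, which is the ordinary second-law floor. The abstract's claim is that constraints on which subsystem can directly affect which other subsystem push the minimal achievable value strictly above zero.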

https://iopscience.iop.org/article/10.1088/1367-2630/abc5c6