via [PDF] A Framework for Systemic Design | Semantic Scholar

Corpus ID: 56245403

Lucy Suchman: https://en.wikipedia.org/wiki/Lucy_Suchman

Also: https://everything2.com/title/Simon%2527s+ant

https://www.gamasutra.com/blogs/LuisGuimaraes/20131204/205979/Simons_Ant_On_The_Beach.php

via Simon’s ant & system complexity – The Seemingly Unrelated

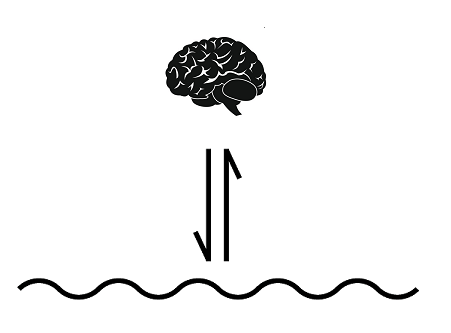

Where is the complexity? Just because a system’s environment is complex does not mean that the systems operating within it must be complex as well.

“An ant, viewed as a behaving system, is quite simple. The apparent complexity of its behavior over time is largely a reflection of the complexity of the environment in which it finds itself.” — Herbert Simon (Simon’s Law)

Simon’s Law is about an ant on a beach looking for food. If you were to graph the ant’s path, it would look swervy and complex.

If you saw this line with no other context, you’d think to yourself: “some ant.” If, however, you also had a picture of the beach it crossed, you would realize that there is nothing special about the ant at all.

In complex terrain, even a simple robot can mimic the ant’s path with only a few simple rules. That robot could be programmed to change course whenever the straight path is blocked. There are Arduino kits designed for middle schoolers that do this.
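To make the point concrete, here is a minimal sketch in Python, assuming a toy grid beach and a set of hypothetical rules (head east; if blocked, sidestep north; failing that, sidestep south). The names `walk` and `count_turns` and the obstacle layout are illustrative inventions, not the logic of any particular Arduino kit. The same rules give a straight line on an empty beach and a bendy path once pebbles are added: the complexity lives in the terrain, not the ant.

```python
from typing import List, Set, Tuple

Cell = Tuple[int, int]


def walk(obstacles: Set[Cell], start: Cell, goal_x: int, max_steps: int = 1000) -> List[Cell]:
    """Move a hypothetical 'ant' eastward until it reaches column goal_x.

    Fixed rules, applied at every step:
      1. If the cell to the east is free, step east.
      2. Otherwise, if the cell to the north is free, step north.
      3. Otherwise, if the cell to the south is free, step south.
      4. Otherwise the ant is boxed in, so stop.
    """
    x, y = start
    path = [(x, y)]
    for _ in range(max_steps):  # guard against endless dithering on awkward maps
        if x >= goal_x:
            break
        if (x + 1, y) not in obstacles:
            x += 1
        elif (x, y + 1) not in obstacles:
            y += 1
        elif (x, y - 1) not in obstacles:
            y -= 1
        else:
            break
        path.append((x, y))
    return path


def count_turns(path: List[Cell]) -> int:
    """Count how often the direction of travel changes along a path."""
    moves = [(b[0] - a[0], b[1] - a[1]) for a, b in zip(path, path[1:])]
    return sum(1 for m1, m2 in zip(moves, moves[1:]) if m1 != m2)


if __name__ == "__main__":
    empty_beach: Set[Cell] = set()
    # Two made-up "pebbles": short walls the ant has to walk around.
    pebbly_beach: Set[Cell] = {(3, 0), (3, 1), (3, 2), (7, 3), (7, 4)}

    print(count_turns(walk(empty_beach, (0, 0), 10)))   # 0: a straight line
    print(count_turns(walk(pebbly_beach, (0, 0), 10)))  # 4: same rules, bendier path
```

Only the set of obstacles changes between the two runs; the rules stay identical.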

Simon’s Ant reminds us to always take the environment into consideration when analyzing a problem. In other words: understand both the content and context of any problem. Most people believe that complex problems require equally complex solutions. But the inverse is often more accurate when context is considered.

Be wary of simple problems.

Harish's Notebook - My notes... Lean, Cybernetics, Quality & Data Science.

In today’s post, I am looking at the Free Energy Principle (FEP) by the British neuroscientist, Karl Friston. The FEP basically states that in order to resist the natural tendency to disorder, adaptive agents must minimize surprise. A good example: successful fish typically find themselves surrounded by water and only very atypically out of water, since being out of water for an extended time leads to a breakdown of homoeostatic (autopoietic) relations.[1]

Here the free energy refers to an information-theoretic construct:

Because the distribution of ‘surprising’ events is in general unknown and unknowable, organisms must instead minimize a tractable proxy, which according to the FEP turns out to be ‘free energy’. Free energy in this context is an information-theoretic construct that (i) provides an upper bound on the extent to which sensory data is atypical (‘surprising’) and (ii) can be evaluated…
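For readers who want the bound in the quote spelled out: in the usual variational formulation, for an observation o, hidden states s and any approximate belief q(s), free energy is F = sum_s q(s) [log q(s) - log p(o, s)] = -log p(o) + KL(q || p(s | o)). Because the KL term is never negative, F is always at least the surprise -log p(o), and it equals the surprise when q is the exact posterior. The Python sketch below checks this on a two-state toy model. The "in water / out of water" probabilities are invented for illustration, and this is only the generic variational bound, not Friston's full account.

```python
import math
from typing import Dict

Dist = Dict[str, float]


def evidence(prior: Dist, likelihood: Dict[str, Dist], obs: str) -> float:
    """p(o) = sum over hidden states s of p(s) * p(o | s)."""
    return sum(prior[s] * likelihood[s][obs] for s in prior)


def surprise(prior: Dist, likelihood: Dict[str, Dist], obs: str) -> float:
    """Surprise (surprisal) of an observation: -log p(o)."""
    return -math.log(evidence(prior, likelihood, obs))


def posterior(prior: Dist, likelihood: Dict[str, Dist], obs: str) -> Dist:
    """Exact Bayesian posterior p(s | o)."""
    z = evidence(prior, likelihood, obs)
    return {s: prior[s] * likelihood[s][obs] / z for s in prior}


def free_energy(q: Dist, prior: Dist, likelihood: Dict[str, Dist], obs: str) -> float:
    """Variational free energy: sum_s q(s) * [log q(s) - log p(o, s)]."""
    return sum(
        q[s] * (math.log(q[s]) - math.log(prior[s] * likelihood[s][obs]))
        for s in q
        if q[s] > 0
    )


# Invented toy numbers: a fish is usually in water, and feeling "dry" is rare there.
prior = {"in_water": 0.95, "out_of_water": 0.05}
likelihood = {
    "in_water": {"wet": 0.99, "dry": 0.01},
    "out_of_water": {"wet": 0.20, "dry": 0.80},
}

obs = "dry"
surp = surprise(prior, likelihood, obs)
for q in ({"in_water": 0.5, "out_of_water": 0.5}, posterior(prior, likelihood, obs)):
    # A rough guess for q gives a loose bound; the exact posterior makes it tight.
    print(f"F = {free_energy(q, prior, likelihood, obs):.4f} >= surprise = {surp:.4f}")
```

Surprise itself needs p(o), which sums over every hidden state; the point of the bound is that an organism can push F down by adjusting q without ever computing that sum directly.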

via Boyd’s OODA ‘Loop,’ Really Final Edition – Slightly East of New

The Norwegian Defense University has just published a new version of “Boyd’s OODA Loop” in their journal, Necesse, edited by Royal Norwegian Naval Academy. I had thought that the previous version was about as close to perfection as can be found on this Earth, but alas Necesse is a peer-reviewed journal, and “Reviewer No. 2” ripped it to shreds. After I calmed down, it was clear that Number 2 was right. So the edition published in the journal is vastly improved over the last version.

As Boyd suggested in his final briefing, The Essence of Winning and Losing (all of Boyd’s works are available for free download on our Articles page), the OODA “loop” is simply a schematic representing three processes and the interplay among them:

In fact, he even called his drawing of the OODA “loop” a “sketch,” strongly indicating that there might be better ways to represent these processes, and over time, people have suggested several.

The folks at Necesse have done a magnificent job of making this rather long and complex paper readable. Although I am sure there are many people involved whom I do not know — you have my sincere gratitude — I would like especially to thank two officers of the Royal Norwegian Navy whom I know quite well and am proud to call colleagues, Commanders Roar Espevik, Main Editor of Necesse, and Tommy Krabberød, who approached me with the idea of a new version of the paper and encouraged me to press on with a major revision as a result of certain peer review comments.

You can download the paper from the Articles page. The current edition of Necesse, which contains the paper, is available at https://fhs.brage.unit.no/fhs-xmlui/handle/11250/2647802, and past issues can be found at https://fhs.brage.unit.no/fhs-xmlui/handle/11250/2559117. It’s an interesting journal. There are quite a few articles in English, and, through the miracle of Google Translate, you should have no trouble with the others.

The origin of the name, incidentally, is found on the last page of the journal.

Oliver Wendell Holmes (OWH) was a Supreme Court justice who once said something so profound that few people at the time, including himself, really understood the depth of the sentiment.

There are a few slightly different versions quoted in various places, but I think this one best captures the sentiment. He also said a few other things, many notable, some stupid and several now unrepeatable. With regard to this quote, he is essentially saying that on either side of the inherent ambiguity of complex situations there are calmer, more understandable spaces, but, and this is the crux, those two spaces are not the same. The uninitiated easily confuse them, yet operating effectively within each is fundamentally different.

We’re all familiar with the simple stuff on “this side”, as he calls it. There are some things that are enduring, easy to understand, buried in common knowledge, do…

via Systemic Design: Live Discussion – YouTube

LIVE – 23 March, 18:00 UK time

I include this as an example of the kind of approach to ‘systems thinking’ which we are thankfully seeing less of these days. I have no doubt these are good, wise, well-informed people teaching valuable stuff – and you always have to account for how an article is written. But the two examples of systems thinking tools given here are RACI and the iceberg model. In terms of really getting at what systems thinking is, I would almost characterise this piece as ‘not even wrong’.

via “Gradually, then suddenly” — 4 ways to think about Coronavirus | GovInsider

Exponential growth explained.

The current situation around the coronavirus epidemic is evolving rapidly. In some of my social circles the most pressing worry is, however, not centered on what we ought to be doing to get this under control. For many people the overriding concern is seemingly not to be seen as worried or, God forbid, as “panicking”.

From what I can tell this doesn’t derive from a sober analysis of the facts but from some sort of magical thinking which says that bad things haven’t happened here in a long time and therefore surely this cannot be bad.

Mental models, like the one just mentioned above, are the things which help us think about the world. That’s why, in situations like these, it may be helpful to examine and challenge our mental models.

So here are four basic concepts (taken from complex systems thinking) which can help us make sense of what is going on. They also help explain why epidemiologists and public health experts are as alarmed as they are.

Exponential growth: “Yesterday everything was still under control?!”

Italy went from one diagnosed case to well over 3,000 in just over a month (34 days), with only three cases in the first 21 days. It’s safe to say that most people did not intuitively expect the number of diagnosed cases to grow so quickly.

We are all familiar with the story of the inventor who asked his emperor for a modest reward: one grain of rice on the first square of the chessboard, two on the second square, four on the third square, and so on until all squares are filled. About halfway across the chessboard, the emperor realizes that the request wasn’t as modest as it seemed.

The point is that exponential growth keeps surprising us, and for some reason we don’t seem to have a good intuitive handle on it.

Ray Kurzweil coined the expression “the second half of the chessboard”. In the first half the effects are large but potentially manageable. In the second half things spiral out of control.

“Everything is fine” -> “It’s growing but we got this under control” -> “Oh…”
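The arithmetic behind the chessboard story is easy to check. A short Python sketch, assuming the usual 64-square board with square n holding 2^(n-1) grains:

```python
def grains_on_square(n: int) -> int:
    """Grains on square n (1-indexed): one grain, then doubling on every square."""
    return 2 ** (n - 1)


first_half = sum(grains_on_square(n) for n in range(1, 33))   # squares 1-32
whole_board = sum(grains_on_square(n) for n in range(1, 65))  # squares 1-64
second_half = whole_board - first_half

print(f"{first_half:,}")                  # 4,294,967,295
print(f"{second_half:,}")                 # 18,446,744,069,414,584,320
print(grains_on_square(33) > first_half)  # True: one square beats the whole first half
print(second_half // first_half)          # 4294967296, i.e. 2**32
```

Each square holds one grain more than all the previous squares combined, which is why the second half holds 2^32 times as much rice as the first.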

What does that mean?

A small number of COVID-19 cases combined with an exponential growth rate may not be, as we might assume, a small problem. Absent an effective intervention that prevents further exponential growth, a small number of cases may already spell deep trouble. Better to avoid getting into the ‘second half of the chessboard’ (see also this thread by epidemiologist Marcel Salathé on this topic).

Of course the exponential growth of coronavirus infections cannot literally go on forever because there is only a finite number of humans. It is also true that diseases do not usually spread at an exponential rate for the entire duration of an epidemic.
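One textbook way to see why growth cannot stay exponential is the logistic curve, where growth slows as a finite population (the capacity) gets used up. This is a deliberately crude sketch with made-up parameters, not an epidemic model such as SIR. It only shows that the two curves are nearly indistinguishable early on, which is exactly when the exponential phase is easiest to underestimate.

```python
from typing import List


def exponential(n0: float, rate: float, steps: int) -> List[float]:
    """n(t+1) = n(t) * (1 + rate): unchecked compounding."""
    values = [n0]
    for _ in range(steps):
        values.append(values[-1] * (1 + rate))
    return values


def logistic(n0: float, rate: float, capacity: float, steps: int) -> List[float]:
    """n(t+1) = n(t) + rate * n(t) * (1 - n(t) / capacity): growth slows near capacity."""
    values = [n0]
    for _ in range(steps):
        n = values[-1]
        values.append(n + rate * n * (1 - n / capacity))
    return values


# Hypothetical parameters: 50% growth per step, a population of 1,000.
exp_curve = exponential(1, 0.5, 30)
log_curve = logistic(1, 0.5, 1000, 30)
for t in (5, 10, 20, 30):
    print(t, round(exp_curve[t]), round(log_curve[t]))
```

Early on the logistic curve tracks the exponential one closely; it only bends away once a sizeable share of the population has been reached.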

The point is, however, that we will tend to underestimate the danger that an initial small number of cases poses because we struggle to imagine how quickly that small number can turn into a very large one.

The current doubling time (as of March 7, excluding China) is about four days. In other words, every four days the number of known coronavirus cases outside China doubles. In January, before the spread of the infection in China slowed, it was less than two days, which translates into a more than tenfold increase in a week.
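The doubling-time arithmetic can be checked with the standard relation N(t) = N0 * 2^(t / T_d), where T_d is the doubling time. A minimal Python sketch (the function names are illustrative):

```python
import math


def growth_factor(days: float, doubling_time: float) -> float:
    """How many times larger a quantity gets after `days` if it doubles every `doubling_time` days."""
    return 2 ** (days / doubling_time)


def days_to_multiply(factor: float, doubling_time: float) -> float:
    """Days needed to grow by `factor` at a given doubling time."""
    return doubling_time * math.log2(factor)


print(round(growth_factor(7, 4), 2))      # 3.36: doubling every four days
print(round(growth_factor(7, 2), 2))      # 11.31: doubling every two days
print(round(days_to_multiply(10, 2), 2))  # 6.64: a tenfold rise takes under a week
```

The same function shows why waiting is costly: at a four-day doubling time, a two-week delay means `growth_factor(14, 4)`, roughly 11 times as many cases to deal with.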

Such exponential growth is why intervening early and heavily may well be justified. The logic is the same for anything undesirable that grows exponentially: you want to nip it in the bud. Early action may well be orders of magnitude cheaper and easier than reacting later.

Phase changes: Everything is fine until it suddenly isn’t

Complex systems tend to have ‘tipping points’ and go through ‘phase changes’ once they reach one of those. This means that once a system reaches a certain threshold things can change rapidly.

Take the provision of healthcare in hospitals as an example. An epidemic starts and hospital beds start filling up. Everything is basically fine. We can provide adequate medical care to everyone who shows up and needs it. No need to overreact or introduce costly containment measures, right?

Maybe, except that at some point we will hit a ‘tipping point’ and run out of hospital beds (or ventilators, or masks, or any other finite resource).

Once that happens, things shift suddenly.

Survival rates might go down because we can no longer provide care to the same standard, infection rates among healthcare staff go up because there aren’t enough masks to go around, and so on.

Imagine a bathtub that keeps filling up with water. Water flows in at a constant rate. The tub slowly fills. Everything is completely fine. Until at one point the tub starts to overflow. The fact that the tub hasn’t overflowed yet doesn’t tell us that we shouldn’t worry about it overflowing.
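The bathtub picture can be written as a tiny stock-and-flow simulation. The sketch below assumes, for simplicity, a constant inflow and no drain at all (think of hospital beds filling with no discharges); the capacity and inflow numbers are hypothetical. The quantity that matters, overflow, stays at exactly zero right up until the step when it doesn't.

```python
from typing import List, Tuple


def fill_tub(capacity: float, inflow_per_step: float, steps: int) -> List[Tuple[int, float, float]]:
    """Return (step, level, overflow) for a tub with constant inflow and no drain."""
    level = 0.0
    history = []
    for step in range(1, steps + 1):
        wanted = level + inflow_per_step  # where the level would be without a rim
        level = min(wanted, capacity)     # the rim caps the level
        overflow = wanted - level         # whatever does not fit spills over
        history.append((step, level, overflow))
    return history


for step, level, overflow in fill_tub(capacity=100, inflow_per_step=10, steps=12):
    print(step, level, overflow)
```

For ten steps the overflow column reads zero, which says nothing about the eleventh.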

The same logic also holds for supply chains. Many firms will have some amount of material in stock.

The fact that they are still able to function for a period of time in the absence of continued deliveries from their suppliers tells us very little about how resilient the system really is.

Everything can seem to continue just fine for a while until it suddenly starts to fall apart because one or more critical components are simply not available anymore. For the most part we should be able to know in advance where things will fall apart — though that doesn’t necessarily mean that we can do anything about it.

Delayed feedback cycles: “why are things still getting worse even though we introduced all these measures?”

Imagine how hard it would be to drive a car where there was a lag of just a few seconds between you turning the steering wheel and the car doing what you want it to do. It would be phenomenally annoying.

(This is also why many cooks prefer gas stoves over electric ones: an electric stove has a built-in delay between turning the knob up or down and the temperature changing. With a gas stove there is practically no delay; the heat rises or falls almost immediately.)

This is the situation we are finding ourselves in, both in terms of detection of the disease and in terms of understanding the effects of measures taken in response to the epidemic.

The cases that are being diagnosed today are people who got infected two weeks ago or so. The current count of diagnosed cases captures a past reality, not the present situation. It’s like looking at a star at night: because light takes time to travel to us, you’re looking at the past.

The same delay applies to our understanding of whether the measures being taken are effective. Italy introduced country-wide school closures on March 4. Whether these will curb the number of new infections will be known only a week or more after that (and quite possibly even later).
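The reporting lag can be sketched in a few lines. Everything here is a hypothetical toy: new infections grow by 20% a day until an intervention on day 15, then shrink by 10% a day, and each infection is assumed to show up in the reported numbers exactly 14 days later (the "two weeks or so" above). Reported cases keep climbing for two weeks after the turnaround, even though the intervention worked immediately.

```python
from typing import List


def daily_infections(days: int, intervention_day: int,
                     growth_before: float = 1.2, growth_after: float = 0.9,
                     start: float = 10.0) -> List[float]:
    """New infections per day; the daily growth factor switches on intervention_day."""
    counts = []
    current = start
    for day in range(days):
        counts.append(current)
        current *= growth_before if day < intervention_day else growth_after
    return counts


def daily_reports(infections: List[float], delay: int) -> List[float]:
    """Reported cases per day: each infection is only seen `delay` days later."""
    return [0.0] * delay + infections[: len(infections) - delay]


def peak_day(series: List[float]) -> int:
    """Index of the largest value in the series."""
    return max(range(len(series)), key=series.__getitem__)


infections = daily_infections(days=40, intervention_day=15)
reports = daily_reports(infections, delay=14)
print(peak_day(infections), peak_day(reports))  # 15 29
```

Anyone judging the intervention only by the reported curve would see things getting worse for a full two weeks after the true turning point.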

This makes decision-making very difficult for governments. One implication is that any restrictions that are put in place will likely be lifted slowly and gradually rather than all at once in order to avoid wild swings.

Another implication, particularly when you put this together with exponential growth, is that you need to act early because the results of the intervention will only show with a delay.

The actions we take today have to match the magnitude of the problem in a week’s time (or so). Otherwise we will be playing catch-up forever – and that is a losing proposition. What might look like an overreaction is, in fact, more likely to be perfectly proportionate. Put differently and to a rough first approximation: unless it looks like an overreaction it may not be sufficient.

Leverage points: (relatively) small change, (relatively) large effect

Another feature of complex systems is that there usually exist leverage points, interventions which can create an outsized effect because of their position and influence in the system.

This cuts both ways, of course.

If you wanted to accelerate the spread of SARS-CoV-2 quickly and cheaply you’d be well advised to encourage mass gatherings.

If, on the other hand, you want to do the opposite, you’d encourage ‘social distancing’, increased hand washing and other personal hygiene measures. Because they are, hopefully, ‘leverage points’, these may well make a much bigger difference than we might intuitively believe.

Other likely leverage points include travel restrictions and the early isolation of suspected cases. Travel restrictions prevent geographic spread and allow the response to focus on a specific area. The early isolation of suspected cases prevents those individuals from infecting others. The effects over time are large, even if not all of the suspected cases in isolation have coronavirus.

“Death of Achilles” by Rubens — Paris found a leverage point and… you know how the story ends

If these four concepts happen to apply in this situation then we’re well advised to act swiftly because that may well save us from a lot of pain.

I offer these thoughts in the spirit of Donella Meadows — a pioneer of systems thinking — who said: “Remember, always, that everything you know, and everything everyone knows, is only a model. Get your model out there where it can be viewed. Invite others to challenge your assumptions and add their own.”

Further reading:

I owe a lot of thanks to all the individuals who took the time to read and comment on initial drafts. I asked people with expertise in epidemiology and other related fields to look over it in an attempt to avoid obvious mistakes. Special thanks to Yaneer Bar-Yam for his comments on the first version of this essay. Any remaining errors are, obviously, mine.

This article is written by Danny Buerkli (@dannybuerkli). He is a co-founder at staatslabor, a government reform lab based in Switzerland, and has an interest in emerging public administration paradigms and self-organisation. Previously he was a director at the Centre for Public Impact, a not-for-profit foundation that works to reimagine government. This essay was originally published here in English on 8 March 2020.

They also say:

The Benefits (Yes, Benefits) of Fear, Conflict, Failure, and Resistance

April 29, 2020, 11:00 am – 12:30 pm PST

Discover our Past Webinars:

Designing a Powerful Shared Intent (February, 2020)

Download the slide deck we used in this session.

Get the Four Agendas for Collaborative Innovation and a blank Intent Map.

Four Agendas for Leading Multistakeholder Collaboration (January, 2020)

Hosted by Tamarack Institute

Download the slide deck we used in this session.

Get the Four Agendas for Collaborative Innovation.

Leveraging the 5 Critical Design Tensions in Collaborative Innovation (November, 2019)

Download the slide deck we used during this session.

Get a copy of the polarity mapping exercise we completed during the webinar.

Learn more about Cliff Kayser and Polarity Partnerships.

Collaborative Innovation: What It Is, How It’s Different, and Why It Works (August, 2019)

source: https://www.wearecocreative.com/webinars
