Cybernetic Serendipity: History and Lasting Legacy

Catherine Mason considers the ICA’s groundbreaking computer art exhibition of 1968 and looks at how it has shaped digital art in the 50 years since

by CATHERINE MASON

The impact of the pioneering exhibition Cybernetic Serendipity at London’s Institute of Contemporary Arts in 1968 should not be underestimated. It is still considered to be the benchmark computer art exhibition for its influence on many pioneers, as well as for introducing the subject to a wider audience. Fifty years on, the historical relevance of this groundbreaking show continues in our digitally driven world in almost everything we visually (and even aurally) consume – from CGI and special effects seen in Hollywood blockbusters to design encountered in our everyday lives and, of course, in fine arts. There can be few artists working in the 21st century who are not touched by some aspect of digital presence in their work.

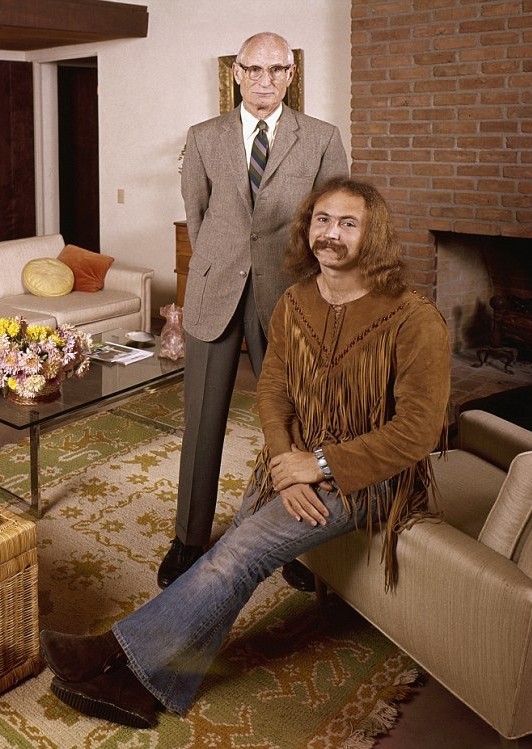

James Faure Walker. View, 2016. Archival inkjet print, 58 x 66 cm.

Cybernetic Serendipity (CS) was the first comprehensive international exhibition in Britain devoted to exploring the relationship between new computing technology and the arts. There had been exhibitions of machines before, but CS was the first gallery show of its type. Uniquely in the UK in a gallery setting, it featured collaborations between artists and scientists, and showed these to be on an equal footing. The breaking down of barriers between disciplines was an important factor. Machines were shown alongside artworks and no differentiation was made between object, process, material or method, nor between the backgrounds of makers, whether art-school educated or not. One of the aims of CS was to show the scope of what was possible, emphasising the optimistic and celebratory nature of the project. Although its subject matter was avant garde, presenting a topic and style of artwork that was outside the mainstream of British art at this time, CS was facilitated and inspired by a postwar spirit of optimism in the positive power of new technologies.

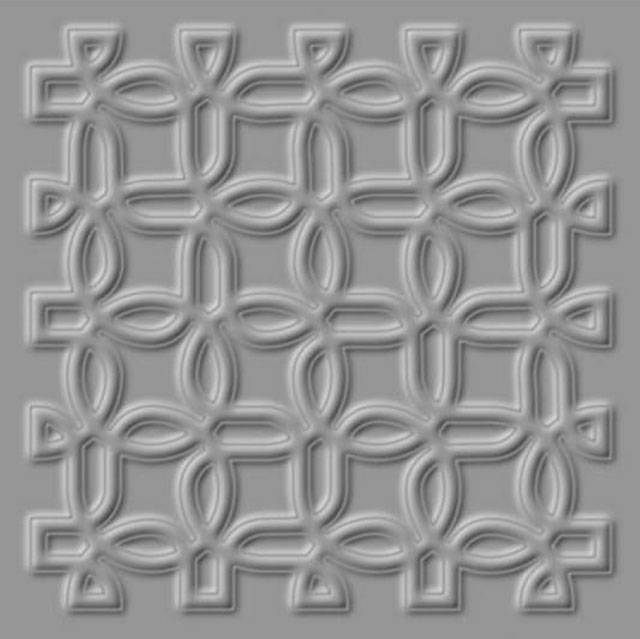

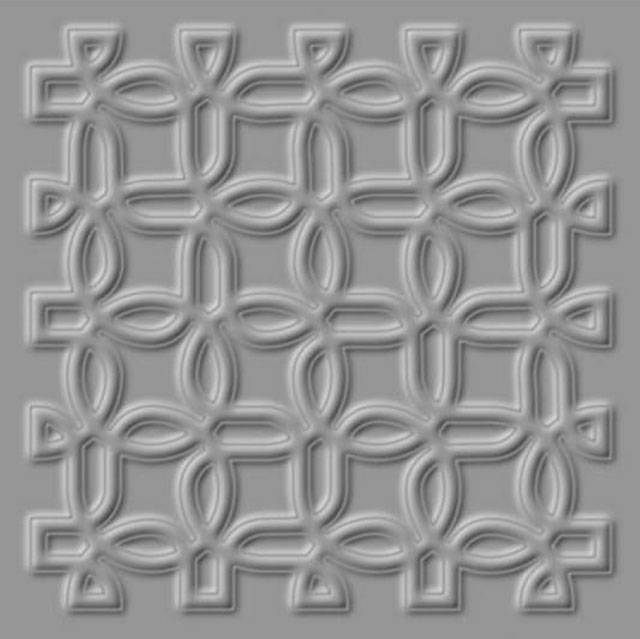

Paul Brown. Ceiling Detail from the House of Signs, 1996. Giclée print, 19.68 x 19.69 in. Included in Creativity and Collaboration: Revisiting Cybernetic Serendipity, National Academy of Sciences, Washington DC, 2018.

The curator Jasia Reichardt, armed with a letter of introduction to IBM USA, was able to access important artists and corporate giants such as Boeing and General Motors.

The exhibition was opened by the then minister of technology, Tony Benn. The Ministry of Technology was set up under Prime Minister Harold Wilson’s “white heat of technology” government, to promote industrial efficiency and the use of new technology in industry. At the 1963 Labour party conference, Wilson set out Labour’s plan for science, promising a Britain “forged in the white heat of this revolution” with “no place for restrictive practices or for outdated methods”. Science and technology were seen as the engine of progress, a driving force for industrial innovation and economic prosperity. It was the Ministry of Technology that forced the series of mergers that created ICL – Britain’s biggest computer manufacturer, as competition for the US’s IBM.

The burgeoning subject of computer arts or cyberart was thus introduced to a younger generation of artists in a positive social and political climate. This generation subsequently laid the foundation for decades of advancement in the arenas of digital image-making, animation, interactivity, intermedia and cross-disciplinary collaboration in the arts, which is a feature of much contemporary art today.

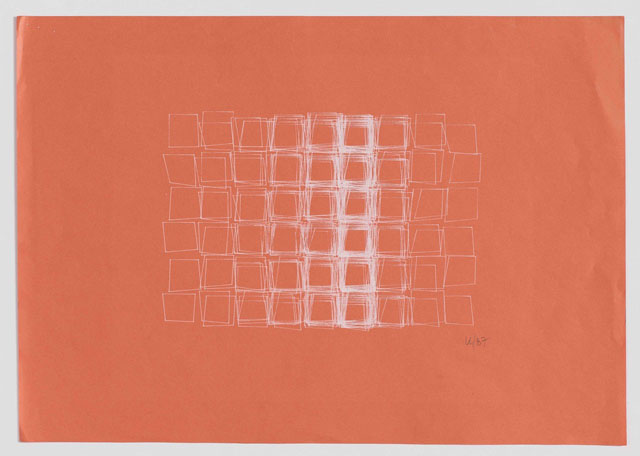

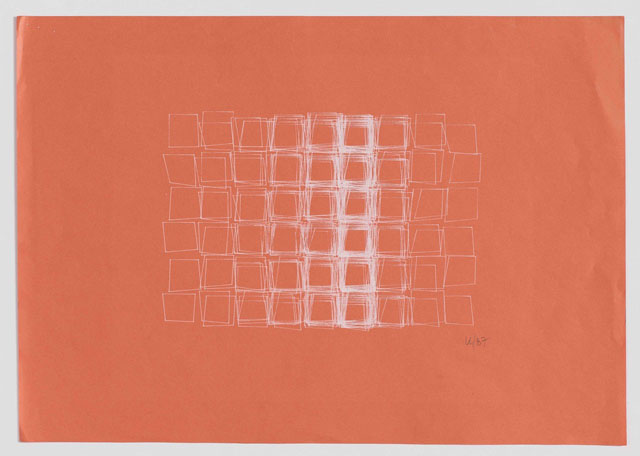

Vera Molnár. Structure de Quadrilatères (Square Structures), 1987. Computer drawing with white ink on salmon-coloured paper, 11 3/4 x 16 1/2 in. Courtesy Senior & Shopmaker Gallery, New York.

The complexity and rarity of computers during this period meant that any artform based around them was bound to be a specialised branch of art, highly dependent on support and funding. This was not least because of the expensive, large-scale nature of much early equipment and the resulting technical expertise required to operate it. Before the onset of user-friendly systems, proprietary software and personal computers, artists had to build relationships with scientists, learn to write code and often construct their own hardware.

Frieder Nake. Bundles of straight lines, 1965. © the artist.

As a result of these issues of access, work during this pioneering period was created both by artists and by those from a technical or scientific background. Much work was experimental in nature and, as such, often ephemeral. Sadly, some work survives today only in descriptions or photographs. Equipment and components that were expensive and hard to come by could be, and regularly were, repurposed and recycled in subsequent projects and, today, the original physical object may no longer exist.

In this short article, there is space to mention only a small number of the artists involved and highlight some of the notable events; the intention here is to give an introduction to this subject and, I hope, a flavour of the wealth of artistic activity that took place during this creatively rich period.

Computer art is a broad label used here as an historical term to describe work made with or through the agency of a digital computer predominately as a tool, but also as a material, method or concept, from around the late-1950s onward. As Reichardt wrote in her introduction to Studio International’s accompanying publication, the exhibition showed “… artists’ involvement with science, and the scientists’ involvement with the arts [and] the links between the random systems employed by artists, composers and poets, and those involved with the making and the use of cybernetic devices.” A further term, cyberart, has been employed to describe artworks that have been created with, or enhanced by, the use of science and technology.

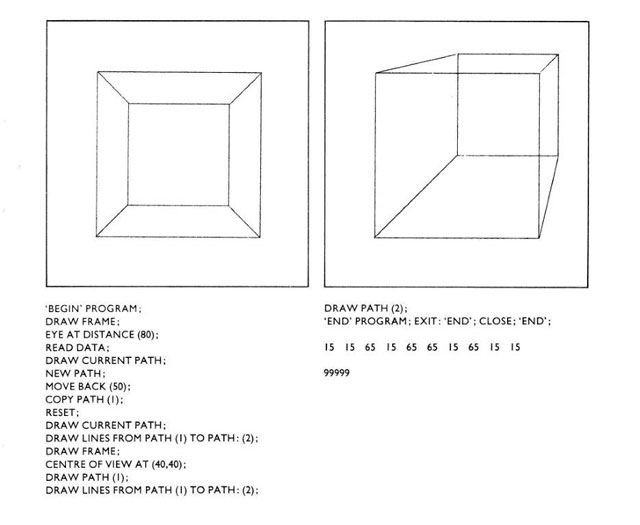

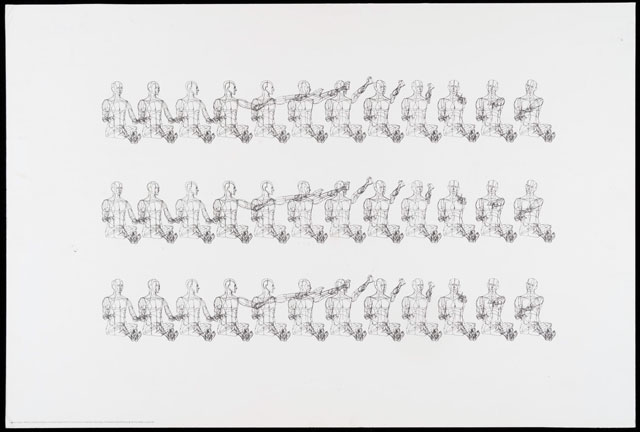

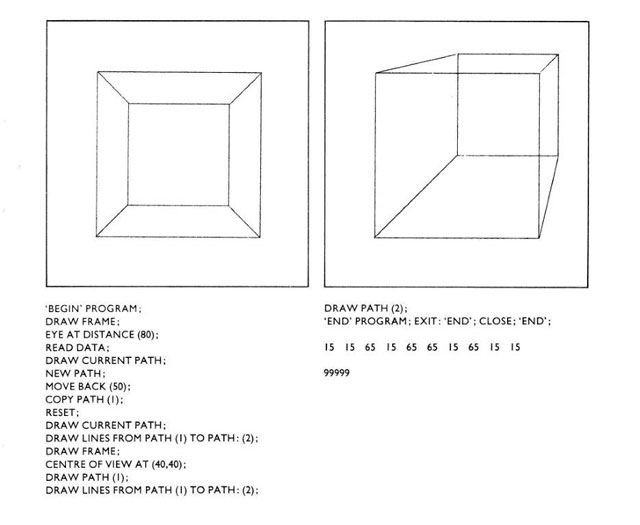

Example of NEW PICTURES computer graphics language for artists at Lanchester Polytechnic, Coventry School of Art by R Johnson, 1979.

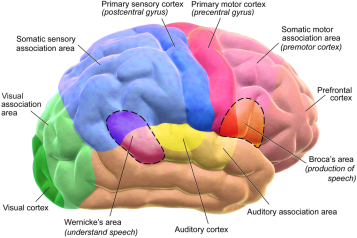

Interest in using new technologies to make art was initially informed by the science of cybernetics.

At this point, computers were at an early stage in their development, commonly thought of as “number crunchers” and often referred to as “electronic brains”. Not only was it difficult to access this equipment, but at this stage it was also difficult to conceive of the computer as an art method or material, let alone one with a capacity for interactivity.

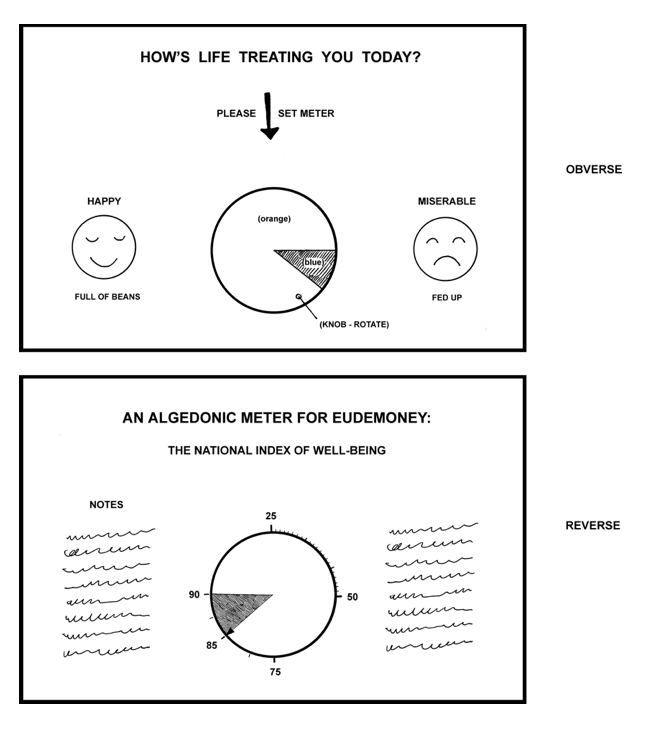

Cybernetics comes from the Greek root kubernetes, meaning pilot or helmsman, and was first used by Plato in his dialogues on Laws and The Republic to denote a governor of a country. In the 1940s, cybernetics was given its current meaning by Norbert Wiener in his 1948 book Cybernetics, Or Control and Communication in the Animal and the Machine. According to Wiener, at a basic level, cybernetics refers to “the set of problems centred about communication, control and statistical mechanics, whether in the machine or in living tissue”. Wiener’s concept was that the behaviour of all organisms, machines and other physical systems is controlled by their communication structures, both within themselves and with their environment. The result of this book was that the notion of feedback penetrated almost every aspect of technical culture. Early influential cyberneticians working in Britain include W Ross Ashby, Stafford Beer, W Grey Walter, Frank H George and Gordon Pask. Pask’s interactive cybernetic work Colloquy of Mobiles was exhibited at CS. This large-scale reactive and educable sculptural installation is now seen as a precursor to human-machine interaction. Cybernetics, the study of how machine, social and biological systems behave, offered a means of constructing a framework for art production in which artists could consider new technologies and their impact on life.

Before CS, the Independent Group, the younger members of the Institute of Contemporary Arts, were meeting in the early 50s. This group of visual artists, architects, theorists and critics included Richard Hamilton, Reyner Banham, Eduardo Paolozzi, Peter and Alison Smithson and Theo Crosby. Inspired by Scientific American, Wiener’s writings, Claude Shannon’s information theory, John von Neumann’s game theory and D’Arcy Wentworth Thompson’s book On Growth and Form, they became interested in the implications of science, new technology and the mass media for art and society. Of particular influence on the incubation of cyberart was the 1956 London exhibition This is Tomorrow, a model of collaborative art practice. The catalogue of this show contains the first British published reference to the possible use of computers in art. The artists write of “punched tape … cards” and “motor and input instructions” as being potential tools and methods for art production.1

Roy Ascott. Change Painting, c1960s. © the artist.

Roy Ascott, a student of Hamilton’s, continued the interest in communications systems and cybernetics in the early 60s by incorporating into his work as models the concepts of behaviour and process, stressing media dexterity, interdependence, cooperation and adaptability. For Ascott, art is a system that involves feedback between creator and audience.2 Ascott became an influential educator and contributed greatly to the consideration of systematic and programmatic ways of teaching in art schools; ultimately, this led to the use of computers by students in British art schools.

Cyberart in Europe arose from the kinetic art movement, which derived from the European avant garde, seen for example with László Moholy-Nagy and Naum Gabo in Paris from the 20s. Both had a strong identification with science and technology alongside an interest in constructivism. By the 50s, a number of sculptors in Europe were already advanced in the development of art-making according to kinetic and cybernetic principles. The Hungarian-born Frenchman Nicolas Schöffer was pioneering interactive sound-equipped and cybernetic works from the early 50s, collaborating with engineers from Philips Electronics of the Netherlands. Also from this time, the Swiss sculptor Jean Tinguely used found objects and recycled machine parts to create kinetic works in Paris. Tinguely’s ideas spread when he travelled to the ICA in 1959 and to New York in 1960, working with engineer Billy Klüver at the Museum of Modern Art. Both Tinguely and Schöffer featured in CS.

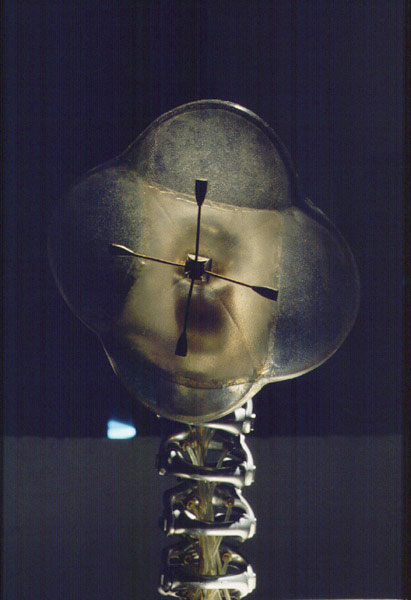

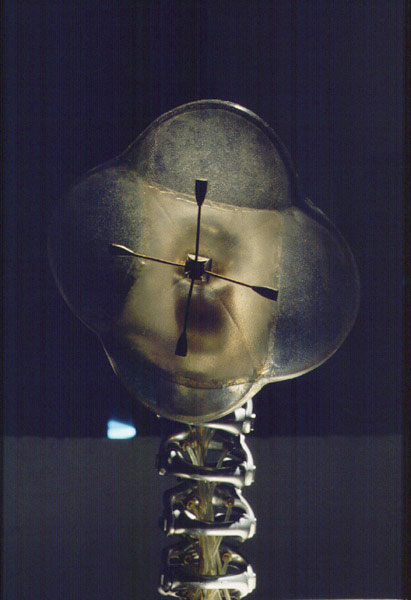

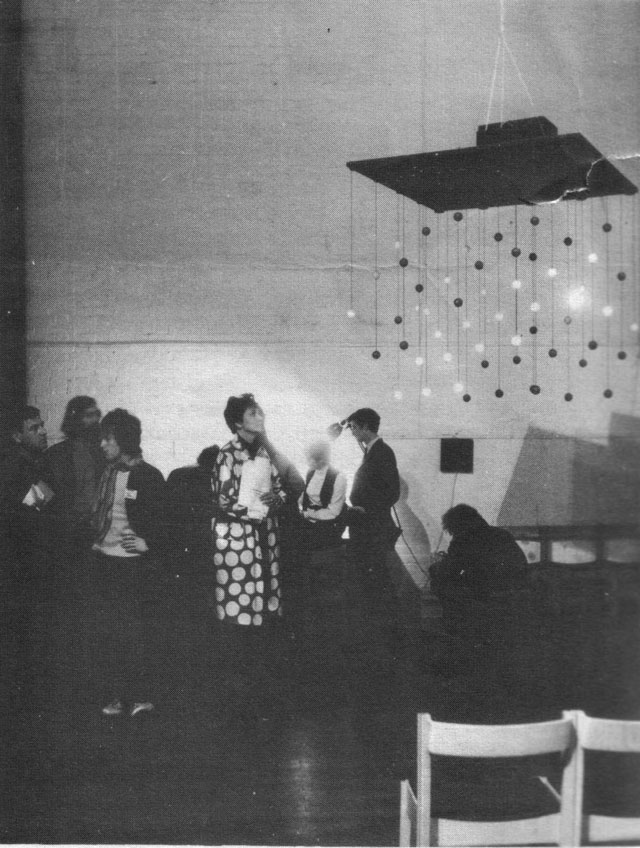

Edward Ihnatowicz. SAM, installation view at Cybernetic Serendipity 1968. © estate of the artist.

In England, the Polish émigré Edward Ihnatowicz took the crucial component of kinetic art – the aspect of spectator participation – and combined it with the cybernetic principle of feedback. At CS, he exhibited SAM, Sound Activated Mobile, which was interactive, moving in a very lifelike manner in response to the sounds visitors made. Judging from surviving CS film footage, it appears to have been a huge hit with audiences.

Some of the first exhibitions of plotter drawings made by computer took place in Stuttgart in 1965, organised by the German philosopher Max Bense. It was Bense who encouraged Reichardt to consider this subject as potential exhibition material for CS. The Stuttgart show included the major German figures Georg Nees and Frieder Nake, pioneers of algorithmic art – writing programs (sets of predetermined instructions) to automatically generate drawings via a plotter. Both were inspired by Bense’s aesthetics, based on Charles S Peirce’s semiotics and Shannon’s information theory.

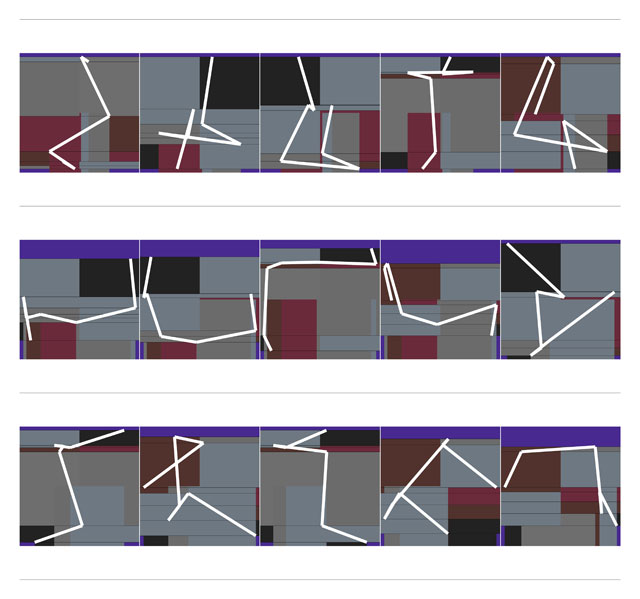

Manfred Mohr. P1650_1186, 2014. Pigment-ink on paper, 40 x 50 cm. © the artist.

Also working with algorithms at this time was Manfred Mohr, in Paris. Drawing via computer allows the possibility of producing sequences through iteration, the repetition of sets of instructions that can be adjusted so that each version is slightly different. It also enables exploration of calculations that would be impossible to carry out mentally, for example Mohr’s investigation of the mathematical permutation of aspects (sub-structures) of the cube and hyper-cube, a feature of his work for many years.
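The idea of iteration described above can be illustrated with a minimal sketch. This is not Mohr’s, Nees’s or Nake’s actual method; it simply assumes one instruction (draw a square) repeated with a rotation that grows a little each time and a small random offset. All function names, parameters and the seed value are hypothetical, chosen only for illustration.

```python
import math
import random


def square(cx, cy, size, angle):
    """Return the four corners of a square centred at (cx, cy), rotated by `angle` radians."""
    half = size / 2
    corners = [(-half, -half), (half, -half), (half, half), (-half, half)]
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    return [(cx + x * cos_a - y * sin_a, cy + x * sin_a + y * cos_a) for x, y in corners]


def variations(count, size=40.0, step=0.05, jitter=2.0, seed=1968):
    """Repeat the same drawing instruction `count` times, adjusting it slightly on each pass.

    The rotation grows by `step` radians per iteration and the centre is nudged
    by a small random amount, so every version differs a little from the last.
    The seed is an arbitrary assumption that makes the sequence reproducible.
    """
    rng = random.Random(seed)
    shapes = []
    for i in range(count):
        cx = i * size * 1.5 + rng.uniform(-jitter, jitter)
        cy = rng.uniform(-jitter, jitter)
        shapes.append(square(cx, cy, size, angle=i * step))
    return shapes


def to_svg_path(points):
    """Turn a list of points into a closed SVG path string (pen down, draw, close)."""
    head, *tail = points
    parts = [f"M {head[0]:.2f} {head[1]:.2f}"]
    parts += [f"L {x:.2f} {y:.2f}" for x, y in tail]
    return " ".join(parts) + " Z"


if __name__ == "__main__":
    for shape in variations(5):
        print(to_svg_path(shape))
```

Each printed line is a path that a plotter-like device, or an SVG viewer, could trace. Changing `step` or `jitter` yields a different but related series from the same instructions.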

In Zagreb, in the former Yugoslavia, the New Tendencies art movement emerged in the early 60s, initially dedicated to concrete and constructivist art, op and kinetic art. They held several exhibitions before shifting their focus in 1968, under the title Tendencies 4, to “computers and visual research”, which launched a symposium, exhibition, competition and the groundbreaking multilingual magazine Bit International. Including computer-generated graphics, film and sculpture, Tendencies transformed Zagreb – already one of the most vibrant artistic centres in Yugoslavia – into an international meeting place where artists, engineers, and scientists from both sides of the iron curtain gathered around the then-new technology.3

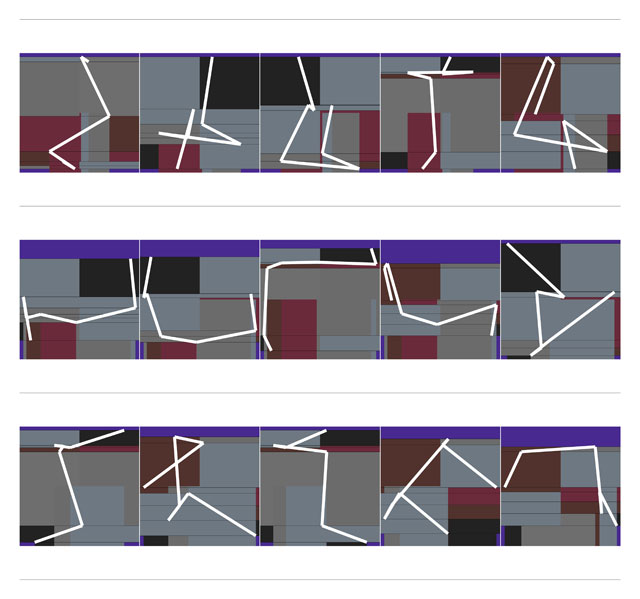

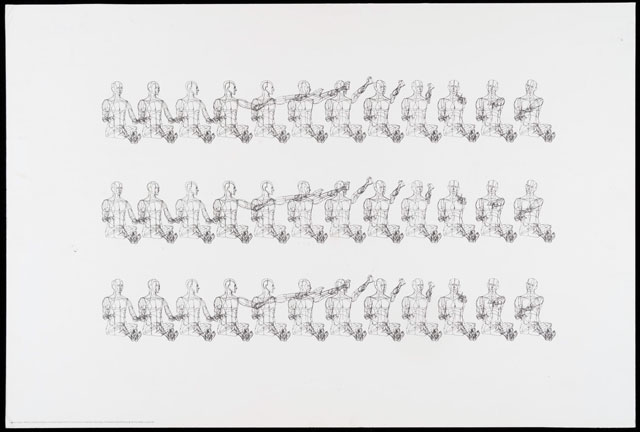

William Fetter. Human Figures, Boeing Computer Graphics 1968. Given by the Computer Arts Society, supported by System Simulation Ltd, London. © Victoria & Albert Museum London.

One of the earliest places in the US to think about computer use in a visual sense was Boeing aircraft company, around 1960. The designer William Fetter was the first to coin the term computer graphics to describe the work he produced there – diagrams for potential seating positions of pilots in cockpits. Often considered the first computer-aided drawing of the human figure, Fetter’s work featured in CS and a print of his “Boeing Man” was produced as part of the Motif Editions print portfolio published in connection with CS. This portfolio was a set of seven lithographs by different artists and also included two works by the Computer Technique Group (made at the IBM Scientific Data Centre in Tokyo), plus works by Charles Csuri and James Shaffer, Maughan S Mason, Donald K Robbins and Kerry Strand.

Another early pioneer in computer graphics and drawing was Ivan Sutherland, who devised in 1963 what was essentially the first graphical interface, a drawing system he called Sketchpad. Sutherland’s work was considerably in advance of anything developed before: he was far-sighted in devising commands users of such a system might need. These included the ability to “undo” things, copying, cutting and pasting, and he foresaw the idea of layering pictures.

Much pioneering work in image-making – graphics and film animation in particular – took place at Bell Telephone Laboratories Inc from 1962 by Ken Knowlton, A Michael Noll, Ed Zajac, Lillian Schwartz and others. The exhibition of plotter drawings by Noll and Béla Julesz at the Howard Wise Gallery in New York City in 1965 was the earliest such show in the US. Engineers from Bell Labs came together with artists and composers to found EAT (Experiments in Art and Technology), which helped to break down barriers between disciplines by utilising new equipment such as video projection, wireless sound transmission and Doppler sonar. This followed the performance art event 9 Evenings: Theatre and Engineering in New York in 1966, which involved artists John Cage and Robert Rauschenberg, among many others, and numerous engineers including Klüver. The group also travelled to Japan as part of Expo ’70.

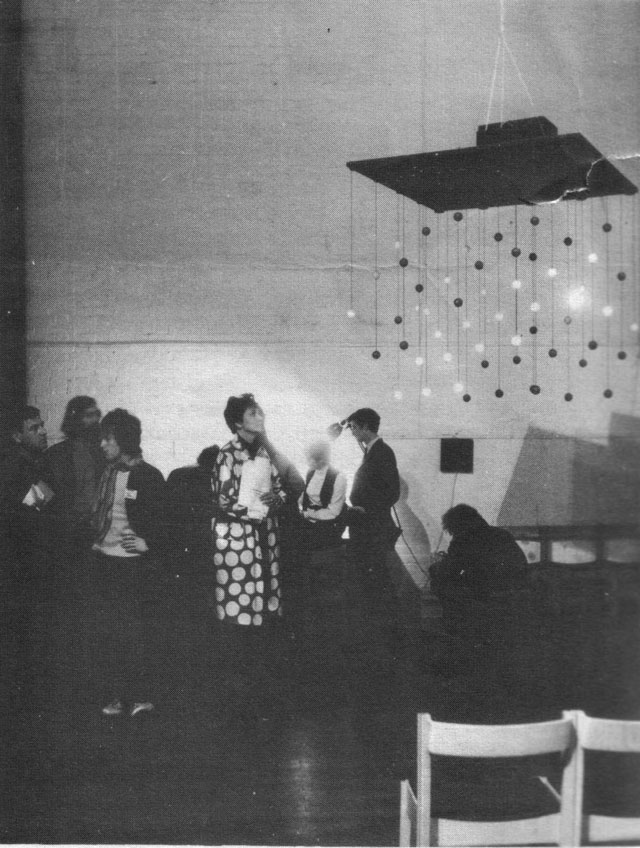

Following the success of CS, The Computer Arts Society (CAS) was founded in 1968, by three individuals who knew Reichardt well – cybernetician George Mallen, ICL programmer Alan Sutcliffe (both participants in CS) and John Lansdown, an architect and pioneer of computer-aided drawing systems. A special interest group of the British Computer Society, CAS was unique at the time as a practitioner-run organisation. It helped to foster computer arts activity by providing a network of support, exhibition opportunities, workshops, events and lectures and, on occasion, funding. International branches existed in the US and Holland. Its bulletin PAGE was initially edited by Gustav Metzger (another exhibitor at CS), featured work by major British and international computer artists, and hosted some fundamental discussions as to the aims and nature of computer art. CAS’s inaugural exhibition Event One, held at the Royal College of Art in 1969, was interdisciplinary, incorporating architecture, sculpture, theatre, graphics, music, poetry, film, performance and dance. As a collaboration of artists and programmers, it was to forecast the future activities of CAS.4

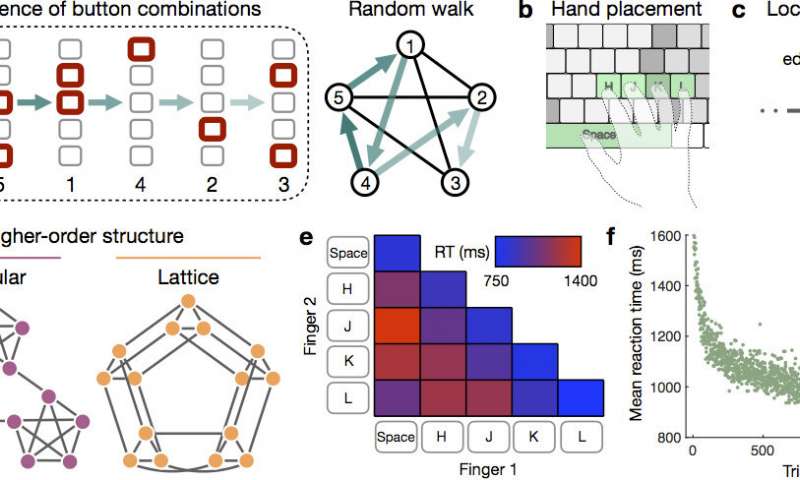

Event One, 1969. Installation view. Photograph: Peter Hunot, Courtesy of the Computer Arts Society.

Into the 70s, in part due to a reorganisation of the art education system in the UK, artists within art schools began to be able to access digital computing technology for the first time. This was made possible largely by the creation of polytechnics, which concentrated expensive resources into fewer, but larger multidisciplinary centres. The first ones were designated in 1967 and many art schools were amalgamated into them. This was a unique period in which art students could learn to program. Important early centres include Coventry, Middlesex and Leicester, as well as the postgraduate programme at the Slade School of Art. These provided not only education and training but, in some cases, career incubation, employment, research facilities and networking opportunities. The character of British computer arts of the 70s is shaped by these unique issues of access within art schools.5

Post CS, books began to be published in this field, often by artist-practitioners. These helped to give practical guidance as well as defining a place within art history. Notable titles include Herbert Franke’s Computer Graphics, Computer Art (1971), Ruth Leavitt’s Artist and Computer (1976) and Jonathan Benthall’s Science & Technology in Art Today (1972). One of the first theory books to describe the contemporary history was published the same year as CS. Jack Burnham’s Beyond Modern Sculpture: The Effects of Science and Technology on the Sculpture of This Century was followed in 1970 by his exhibition Software, Information Technology: Its New Meaning for Art at the Jewish Museum in New York, predicated on the idea of software as a metaphor for art.

By the early 80s, computing technology had changed radically. It was no longer imperative to construct one’s own hardware. Proprietary software packages were becoming widely available and user-friendly systems were gaining ground; financially, too, the costs decreased. Although many continued to program, the rise of software meant that it was no longer necessary to write code to achieve many similar effects. This led to new avenues of exploration. Within the realm of digital painting, innovations allowed new techniques of layering, digital collage and printing, using, first, paintbox type systems, and later commercial brands such as Photoshop and now the iPad, most famously used today by David Hockney.

The field continues to expand. The contemporary use of gaming engines, scenery-generating software and animation and modelling software allows artists to create moving image and immersive environments like never before. For example, Kelly Richardson’s recent exhibition The Weather Makers at Dundee Contemporary Arts Scotland showed this to great effect.

Kelly Richardson, Leviathan, 2011, 3 channel HD video, commissioned by Artpace San Antonio.

Mat Collishaw. Thresholds (VR view), 2017. 8.5 x 6 x 2.5 m. Courtesy Mat Collishaw.

Developments in virtual reality are also proving of interest. In 2017, Mat Collishaw’s Thresholds, at Somerset House, reimagined a Fox Talbot photography exhibition to great acclaim. Traditional portraitist Jonathan Yeo is now using 3D scanning to produce self-portraits and VR to draw and then create sculpture via 3D printing. Yeo is currently showing at the Royal Academy’s From Life (billed as “from pencil and paper to Virtual Reality”) featuring artists who use traditional media alongside those who engage with emerging technologies such as HTC Vive and Tilt Brush by Google (as used by Yeo).

The making of Jonathan Yeo’s virtual self-portrait, in legendary sculptor Eduardo Paolozzi’s former studio © Jonathan Yeo Studio 2017.

The cross-disciplinary work of the experimental 60s has proved of lasting impact. Many of the pioneers’ ideas featured here became integrated into the mainstream. Much of the technology used by them has now become ubiquitous as we have become accustomed to rapid integration of newer and newer technologies into our daily lives. The interactive, participant-responsive aspect of early cyberart is now a major trend in contemporary new-media-based artwork. Taken for granted by artists now are ideas of freedom in the use of materials, as well as a manner of working that takes into account institutional processes and the relationship between artist and audience and material and environment. Artists publish on their own on the web, with their own documentation and ideas. Artist in residence programmes, informal and ad hoc in the 60s, are now formalised and common within institutions and corporations. Organisations including SIGGRAPH, Leonardo, ISEA, EVA, Ars Electronica, Lumen, Furtherfield, Kinetica and many others continue to champion work in this field.

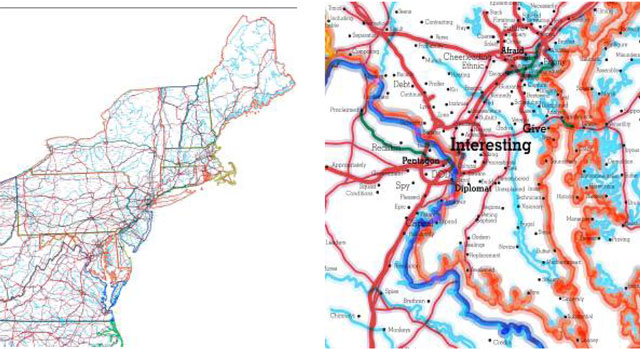

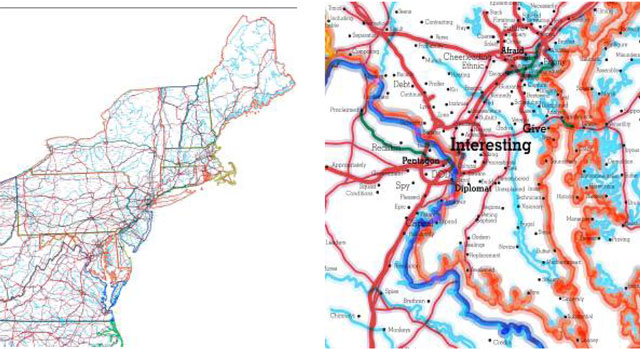

R. Luke DuBois, A More Perfect Union: USA, No. 4 (details), 2011/2017. Four inkjet prints on canvas, 57.5 x 120 in each. Courtesy bitforms gallery, New York. Included in Creativity and Collaboration: Revisiting Cybernetic Serendipity, National Academy of Sciences, Washington DC, 2018.

Cyberart, with its possibilities of immediacy, interactivity and multi-authorship can reach people outside the gallery system and engage new audiences in public places, whether that be sports arenas, music projects or on web- and mobile-based devices. Artists have always been early adopters of technology and those working today are adept at repurposing the vast quantity of data and information that surrounds us, whether to make statements of a sociopolitical or aesthetic nature. As the century progresses, our society faces an increasing number of challenges around issues of security, privacy, data-mining and possible abuses of power of big data collected by corporations and used for commercial ends. Art that is fundamentally engaged with technology has a crucial role to play now and in the future by raising questions, taking a critical stance or simply holding a mirror to our life and times.

References

1. This is Tomorrow by Lawrence Alloway, Reyner Banham and David Lewis, published by Whitechapel Art Gallery, 1956, Section 12.

2. Telematic embrace: visionary theories of art, technology, and consciousness by Roy Ascott, edited by Edward A Shanken, University of California Press, 2003.

3. A Little-Known Story about a Movement, a Magazine and the Computer’s Arrival in Art: New Tendencies and Bit International, 1961–1973, edited by Margit Rosen, published by MIT, 2011.

4. The Fortieth Anniversary of Event One at the Royal College of Art by Catherine Mason.

5. A Computer in the Art Room: The Origins of British Computer Arts 1950-1980 by Catherine Mason, published by JJG, 2008.

• Catherine Mason is a board member of the Computer Arts Society and the author of A Computer in the Art Room: The Origins of British Computer Arts 1950-1980 and White Heat, Cold Logic (MIT, 2009) catherinemason.co.uk
